The New Org Chart Has No Humans in the Middle

Welcome to multi-agent orchestration, where specialized AIs manage other AIs, delegate to humans when they get stuck, and nobody calls a meeting.

4/21/2026 · 12 min read

In ten years, Nvidia plans to have 75,000 human employees and 7.5 million AI agents.

That is not a typo.

Jensen Huang said it on stage at GTC 2026. One hundred agents per person. His exact reassurance to the room: "Those 75,000 employees are going to be super busy."

The audience laughed. Nervously.

That was the appropriate response.

This is not a vision deck. It is a deployment plan from the company that makes the chips every other deployment plan runs on.

Welcome to Part 4.

Parts 1, 2, and 3 of this series covered the why and the when.

Why human cognitive labor is being economically repriced. When the substitution phases hit. What a post-work economy might look like if the institutions that design it don't collapse first.

Part 4 is the how.

Specifically: how a company actually does what every CFO in America is currently trying to figure out. How do you replace a department of thirty people with a coordinated system of AI agents that handles 80% of the work, faster and cheaper, with fewer passive-aggressive Slack messages and zero PTO requests?

The answer has a name.

It is called multi-agent orchestration.

And it is the machinery that runs beneath every data point in this series.

What Multi-Agent Orchestration Actually Is

(Because "AI doing stuff" is not a specification.)

A single AI model is a tool. A multi-agent orchestration is a workforce.

The chatbot you have been using is powerful but limited. It waits for input. It responds. It stops. It cannot plan a multi-step project, hand pieces off to specialists, monitor its own outputs, and course-correct without you holding its hand.

Multi-agent orchestration solves that.

Instead of one model doing everything, you get a network of specialized AI agents. Each one is built for a single task. An orchestrator on top assigns work, manages information flow, and routes outputs from one agent to the next.

The hierarchy looks like this:

A researcher agent gathers data

A writer agent drafts the report

A reviewer agent checks for errors

A compliance agent flags legal risk

A formatter agent outputs the final document

All of it runs while you sleep.
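As a rough illustration of the hierarchy above, here is a minimal Python sketch. Everything in it is an assumption made for the example: the five agent functions are hypothetical stand-ins (plain functions over a dictionary), not calls to any real framework, and the compliance check is a toy keyword match. In practice each function would wrap a model call.

```python
from typing import Callable

# Each "agent" is a hypothetical stand-in: a plain function that reads the
# shared document state and returns an updated copy.
Agent = Callable[[dict], dict]

def researcher(doc: dict) -> dict:
    return {**doc, "data": ["fact A", "fact B"]}

def writer(doc: dict) -> dict:
    return {**doc, "draft": "Report based on: " + ", ".join(doc["data"])}

def reviewer(doc: dict) -> dict:
    return {**doc, "errors": [] if doc["draft"] else ["empty draft"]}

def compliance(doc: dict) -> dict:
    # Toy legal check: flag a risky word if it appears in the draft.
    return {**doc, "legal_flags": [w for w in ("guarantee",) if w in doc["draft"]]}

def formatter(doc: dict) -> dict:
    return {**doc, "final": doc["draft"].upper()}

def orchestrate(pipeline: list[Agent], doc: dict) -> dict:
    """Route the output of each agent to the next one, in order."""
    for agent in pipeline:
        doc = agent(doc)
    return doc

PIPELINE = [researcher, writer, reviewer, compliance, formatter]
result = orchestrate(PIPELINE, {"topic": "Q3 vendor spend"})
print(result["final"])
```

The orchestrator here is deliberately dumb: a fixed, linear route. Real orchestrators also branch, retry, and reassign, which is where most of the difficulty lives.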

The numbers behind the noise

IBM research found that multi-agent orchestration reduces process handoffs by 45% and improves decision speed by 3x compared to single-agent approaches. Organizations using multi-agent architectures report 45% faster problem resolution and 60% more accurate outcomes.

That is not incremental improvement.

That is a restructuring of what a workflow looks like.

The AI agents market is currently at $5.25 billion. It is projected to reach $52.62 billion by 2030. A 46.3% compound annual growth rate. Multi-agent systems are the fastest-growing segment within that explosion.

Gartner clocked a 1,445% surge in enterprise inquiries about multi-agent AI between Q1 2024 and Q2 2025.

Not double. Not tenfold.

One thousand, four hundred, and forty-five percent.

A number like that does not come from hype alone.
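The growth figures in this section can be sanity-checked with a few lines of arithmetic. The sketch below only rearranges the numbers quoted above; it assumes the three market figures come from the same forecast. On that assumption, the implied horizon is roughly six years, which suggests the $5.25 billion baseline sits a couple of years before 2026.

```python
import math

start, end, cagr = 5.25, 52.62, 0.463   # $bn, $bn, annual growth rate

# Years needed for `start` to reach `end` at the stated compound rate:
#   end = start * (1 + cagr) ** years
years = math.log(end / start) / math.log(1 + cagr)
print(f"implied horizon: {years:.1f} years")

# A percentage surge converts to a multiplier as 1 + pct / 100.
surge_pct = 1445
multiplier = 1 + surge_pct / 100
print(f"{surge_pct}% surge = {multiplier:.2f}x the original volume")
```

A 1,445% surge means inquiry volume ended at about fifteen and a half times where it started.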

The Org Chart Nobody Drew

(But everyone is quietly building.)

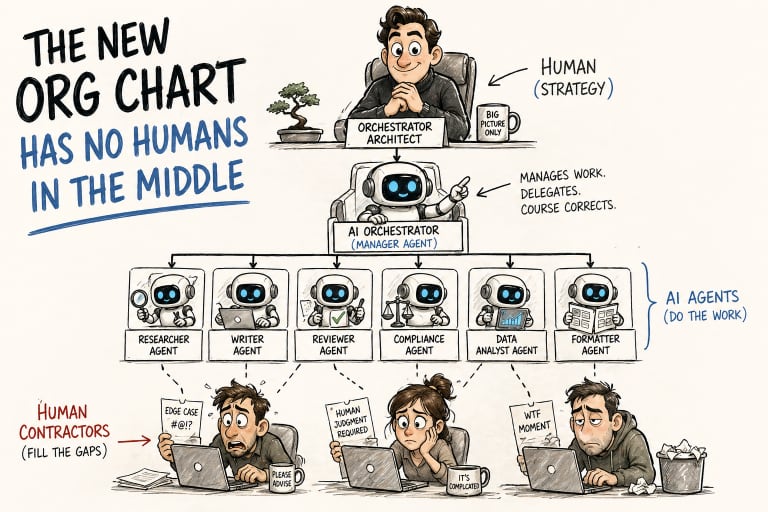

The new corporate structure has four tiers, and humans are not in the middle two.

The consulting industry does not yet have a PowerPoint slide for this. The org chart looks like this.

At the top: a single human, the orchestrator architect. They set objectives. Design the agent hierarchy. Define what counts as a good output. Own the business outcome.

Below them: an AI orchestrator. The manager agent. It breaks high-level goals into tasks, assigns them to specialized sub-agents, monitors progress, and redistributes work when something fails.

Below that: dozens, potentially hundreds, of specialized agents. Researchers. Writers. Coders. Legal reviewers. Data analysts. Each one is narrow, fast, and relentlessly on-task.

And at the bottom (here is the part nobody saw coming): humans.

Not as managers.

As contractors.

Filling the gaps that the agents can't handle. Verifying outputs that require accountability. Providing the tacit judgment that can't be encoded.

What people imagine: A controlled hierarchy of humans managing AI tools.

What actually exists: A hierarchy where humans bookend a stack run by AI. Architect at the top. Contractor at the bottom. Agents in between.
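To make the four tiers concrete, here is a hedged sketch of the manager agent's core loop: try the specialists, redistribute on failure, and hand whatever is left to a human. The class, the registry layout, and the agent names are all hypothetical, invented for this example rather than taken from any orchestration product.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

class AgentFailed(Exception):
    """Raised by a specialist agent that cannot complete its task."""

@dataclass
class Orchestrator:
    # skill name -> ordered list of (agent name, agent function); hypothetical registry
    agents: dict[str, list[tuple[str, Callable[[str], str]]]]
    human_queue: list[str] = field(default_factory=list)  # tier 4: contractors

    def run(self, task: str, skill: str) -> Optional[str]:
        for name, agent in self.agents.get(skill, []):
            try:
                return f"{name}: {agent(task)}"
            except AgentFailed:
                continue  # redistribute to the next agent with this skill
        self.human_queue.append(task)  # no agent succeeded: escalate to a human
        return None

def flaky_reviewer(task: str) -> str:
    raise AgentFailed(task)

def backup_reviewer(task: str) -> str:
    return f"reviewed '{task}'"

orch = Orchestrator(agents={"legal_review": [("reviewer-1", flaky_reviewer),
                                             ("reviewer-2", backup_reviewer)]})
print(orch.run("NDA draft", "legal_review"))     # handled by the backup agent
print(orch.run("board memo", "tacit_judgment"))  # no agent has this skill
print(orch.human_queue)
```

Note where the human sits in this code: not in the loop, but in the queue at the end of it.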

The solo-operator economy is already here

A January 2026 report found that 36.3% of all new global startups are now solo-founded. Founders use AI agent stacks to handle full-stack development, marketing, legal compliance, and customer support. Functions that previously required entire departments.

Three solo founders in Norway, Austria, and the U.S. are already pulling six-figure monthly revenues. Zero traditional employees. Agent orchestration is their entire operating model.

Sam Altman has predicted that the first billion-dollar solo startup will launch by 2028.

It is no longer a thought experiment.

It is a deadline.

The Plumbing Nobody Talks About

(The protocols that make agents actually work together.)

Standardization happened in eighteen months. Most of you missed it.

For agents to coordinate, they need a shared language. Not metaphorically. Literally. Standardized protocols that let one agent hand off a task to another, access external tools, verify identity, and maintain audit trails.

In 2024, this was chaos. Every framework had its own conventions.

By early 2026, a coherent infrastructure layer has emerged:

MCP (Model Context Protocol). How individual agents connect to external tools (databases, APIs, file systems, browser access). It is the agent's nervous system, reaching into the world. Over 10,000 active servers globally and 97 million monthly SDK downloads, with backing from OpenAI, Google DeepMind, Microsoft, and AWS.

A2A (Agent-to-Agent Protocol). How agents talk to each other. Task delegation, capability discovery, progress tracking. Over 100 enterprise partners. Now Google's reference architecture for multi-agent coordination.

ACP and UCP. Commercial transaction protocols. Agents that don't just analyze or communicate but actually buy things, negotiate prices, and execute procurement autonomously.

Translation: The plumbing for an autonomous economy where AI agents transact with other AI agents on behalf of humans (who may or may not be in the loop) is already shipping.
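For a sense of what this "shared language" looks like on the wire: MCP messages are JSON-RPC 2.0, and a tool invocation is a request with the method `tools/call`. The sketch below only builds the message envelopes. The tool name `query_database` and its arguments are hypothetical, and a real client would first perform the protocol's initialization handshake and send these over a transport, both omitted here.

```python
import itertools
import json

_ids = itertools.count(1)  # JSON-RPC requests carry a unique id

def mcp_request(method: str, params: dict) -> dict:
    """Build a JSON-RPC 2.0 request envelope, the message format MCP uses."""
    return {"jsonrpc": "2.0", "id": next(_ids), "method": method, "params": params}

# Ask the server which tools it exposes, then call one of them.
list_tools = mcp_request("tools/list", {})
call_tool = mcp_request(
    "tools/call",
    {
        "name": "query_database",                                # hypothetical tool
        "arguments": {"sql": "SELECT COUNT(*) FROM invoices"},   # hypothetical input
    },
)

print(json.dumps(call_tool, indent=2))
```

The point is less the syntax than the uniformity: any agent that can emit this envelope can use any tool that accepts it.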

Google's 2026 Responsible AI Progress Report declared 2026 "the Era of Agentic Systems," distinguishing it from 2024 (building the foundation) and 2025 (the rise of the proactive partner). Google Cloud Next 2026 featured the Gemini Enterprise Agent Platform, infrastructure specifically designed to help businesses build and manage fleets of AI agents.

The plumbing is being laid.

The building codes are being written.

The question is, who is moving into the structure when it is complete?

The Part the Keynotes Skip

(Multi-agent orchestration is hard. The failure rate is brutal.)

Forty percent of enterprise agentic AI projects will be canceled by the end of 2027. That is not pessimism. That is Gartner.

Not paused.

Not delayed.

Canceled.

Enterprise adoption of multi-agent systems surged 327% in the first part of 2025. Hundreds of companies launched pilots, ran proofs of concept, and gave excited presentations to their boards.

Now the reckoning is coming.

The most common failure modes:

Insufficient state management. Agents lose track of where they are in a workflow. (40% of failures.)

Poor agent handoff design. Tasks get dropped or duplicated at the boundary. (30% of issues.)

Hallucination cascade. One agent produces a confident but wrong output. The next agent treats it as a verified fact. By the final output, the error has propagated through the entire workflow.

Agent sprawl. Companies deploy dozens of one-off agents with no governance, no observability, and no one who actually owns them.

Over-engineering the scope. Trying to automate everything at once instead of finding the workflow that actually benefits from orchestration. (25% of delays.)
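The first two failure modes, lost state and dropped or duplicated handoffs, share a common mitigation: checkpoint progress and make each handoff idempotent. The following is a minimal sketch under simplifying assumptions (a single process, a JSON file as the store); the class name, the handoff id format, and the `draft_reply` step are hypothetical.

```python
import json
import os
import tempfile
from typing import Callable

class WorkflowState:
    """Persist progress so a restarted workflow knows where it left off."""

    def __init__(self, path: str):
        self.path = path
        self.done: dict[str, str] = {}
        if os.path.exists(path):
            with open(path) as f:
                self.done = json.load(f)

    def run_once(self, handoff_id: str, step: Callable[[], str]) -> str:
        # Idempotent handoff: a step that already completed is never re-run,
        # so a retry after a restart does not duplicate finished work.
        if handoff_id not in self.done:
            self.done[handoff_id] = step()
            with open(self.path, "w") as f:
                json.dump(self.done, f)
        return self.done[handoff_id]

path = os.path.join(tempfile.mkdtemp(), "state.json")
calls = []

def draft_reply() -> str:
    calls.append("draft")
    return "Dear vendor, ..."

WorkflowState(path).run_once("dispute-17/draft", draft_reply)
# Simulate a restart: a fresh object reloads the checkpoint from disk.
WorkflowState(path).run_once("dispute-17/draft", draft_reply)
print(len(calls))
```

A production system would use a transactional store rather than a file, but the contract is the same: every handoff has an id, and every id runs at most once.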

The hallucination cascade is particularly nasty.

A single-agent system produces one wrong answer. A human catches it. A chained multi-agent system takes that wrong answer and feeds it to the next agent, which adds confident elaboration and passes it along again. By the final output, the result looks completely authoritative and is completely wrong.

Reality check: The errors get prettier on the way out. Not more accurate.
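One way to interrupt the cascade is to make "verified" an explicit property of the data, so a downstream agent cannot mistake a confident claim for a checked one. The sketch below is an illustration of that idea, not a description of how any particular framework does it; `Claim`, `independent_check`, and `elaborate` are hypothetical names, and the verifier is a toy lookup against a trusted set.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Claim:
    text: str
    verified: bool = False   # True only after an independent check

def independent_check(claim: Claim, trusted_facts: set[str]) -> Claim:
    """Toy verifier: mark a claim verified only if a trusted source contains it."""
    return Claim(claim.text, verified=claim.text in trusted_facts)

def elaborate(claim: Claim) -> Claim:
    """Downstream agent: refuses to build on anything that was not verified."""
    if not claim.verified:
        raise ValueError(f"unverified input, stopping the chain: {claim.text!r}")
    # The elaboration is new content, so it starts out unverified again.
    return Claim(claim.text + " (with supporting analysis)", verified=False)

trusted = {"Invoice 114 was paid on 3 March"}
good = independent_check(Claim("Invoice 114 was paid on 3 March"), trusted)
bad = independent_check(Claim("Invoice 114 was paid twice"), trusted)

print(elaborate(good).text)
try:
    elaborate(bad)
except ValueError as err:
    print(err)
```

The design choice that matters is the last line of `elaborate`: verification does not carry forward. Every stage's output has to earn the flag again.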

Then the benchmark arrived

Mercor's APEX-Agents benchmark is the most realistic assessment of AI workplace capability conducted to date. It tested leading AI models against actual professional tasks from consulting, investment banking, and corporate law.

The results: even the best models completed fewer than 25% of tasks successfully in a single shot. With multiple retries, performance topped out at 40%.

What Musk says: AI can already replace half of all white-collar jobs.

What the benchmark shows: The top models can barely handle a quarter of the individual tasks inside those jobs. Let alone a fully integrated workflow.

That gap, between the keynote and the benchmark, is where most enterprise AI projects are currently dying.
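The retry number deserves a second look. If each attempt were an independent coin flip, a 25% single-shot rate would climb quickly with retries. The sketch below computes that hypothetical curve; the retry counts are illustrative, since the number of retries behind the 40% figure is not stated here.

```python
def pass_at_k_if_independent(p: float, k: int) -> float:
    """Chance that at least one of k independent attempts succeeds."""
    return 1 - (1 - p) ** k

p_single = 0.25  # upper bound on the single-shot rate quoted above
for k in (1, 2, 4, 8):
    print(k, round(pass_at_k_if_independent(p_single, k), 3))
```

Under independence, two attempts would already clear 40% and eight would approach 90%. A ceiling of 40% suggests the failures are strongly correlated: the models tend to fail the same tasks every time, so retrying buys far less than the arithmetic promises.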

What Actually Works

(And why it matters more than the vision.)

Multi-agent orchestration is not overhyped in the long term. It is just much narrower in the short term than the keynotes suggest.

The use cases generating measurable ROI in 2026 are narrow, well-structured, and high-volume:

Software development pipelines. One agent plans, one writes tests, one implements, one reviews. Cognition AI's Devin, an autonomous coding agent, is now generating 25% of its own company's internal pull requests. This is not a simulation. This is a product shipping code to itself.

Document processing and compliance. Invoice dispute resolution. Vendor onboarding. Legal contract review. Procurement validation. Workflows where agents gather evidence, apply policy, draft responses, and route approvals with strong audit trails. Exactly the shape of work that multi-agent systems handle well.

Customer service triage. Agentic systems handling high-volume, low-complexity inquiries while routing complex or emotionally loaded cases to humans. The Klarna lesson from Part 2: AI handles volume; humans handle the moments where people need to feel heard, not processed.

Financial operations. JPMorgan has lifted its AI-assisted productivity rate to 6%. Citigroup saw a 9% lift in coding output. Salesforce's CEO has claimed AI is already doing up to 50% of the company's workload. These are not benchmarks. These are production baselines.

The pattern is consistent.

Multi-agent orchestration works when the workflow can be decomposed into defined, verifiable steps. It struggles when success requires integrated multi-domain judgment, tacit knowledge, or the ability to detect that the question being asked is the wrong question.

Which is to say: the limit is not the agents' cleverness.

The limit is how cleanly the work can be broken down.
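"Defined, verifiable steps" can be read quite literally as a data structure: each step pairs the work with a check on its output. Here is a small sketch of that reading. The `Step` type, the invoice example, and the 5,000 approval limit are assumptions made up for the illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional

@dataclass
class Step:
    name: str
    run: Callable[[Any], Any]
    verify: Callable[[Any], bool]   # the "verifiable" half of the step

def run_workflow(steps: list[Step], value: Any) -> tuple[Any, Optional[str]]:
    """Run steps in order; stop at the first output that fails its check."""
    for step in steps:
        value = step.run(value)
        if not step.verify(value):
            return value, step.name   # report which step needs a human
    return value, None

steps = [
    Step("parse_amount",
         run=lambda s: float(s.replace(",", "")),
         verify=lambda amount: amount > 0),
    Step("apply_policy",
         run=lambda amount: {"amount": amount, "approve": amount <= 5000},
         verify=lambda decision: isinstance(decision["approve"], bool)),
]

print(run_workflow(steps, "1,250.00"))
print(run_workflow(steps, "-40"))
```

If you cannot write the `verify` function for a step, that is usually the signal that the step is not yet ready to hand to an agent.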

The New Employment Stack

(This is the inversion nobody wants to discuss out loud.)

AI agents are not just replacing humans in workflows. In an increasing number of architectures, they are managing them.

Assigning verification tasks. Routing quality control checks. Dispatching human contractors for the gaps the agents can't fill. Then evaluating the quality of their work when they are done.

One framing has gained traction in technical communities, and it lands like a brick:

"The most agentic humans will run 100% of their businesses using agents. Those agents need humans for verification, validation, and filling in the gaps. All the other humans will be hired by these agents."

The new employment stack runs four layers deep, with a small group of highly leveraged humans at the top:

Architects - humans who design agent systems, set the objectives, and own the outcomes.

Orchestrators - AI manager agents that decompose goals, assign tasks, route outputs, and handle failures.

Executors - specialist AI agents doing the actual work: researching, writing, coding, analyzing, reviewing, formatting.

Validators - human contractors brought in to verify edge cases, provide accountable sign-off, and fill the judgment gaps the agents can't close.

The Architects are the new Tier 1 from Part 2: the people that part called the AI Orchestrators. Except now we can be precise about what that title means.

You are not just "using AI."

You are designing and maintaining the hierarchy of agents that runs the business.

Microsoft has already rebranded its development suite to reflect this reality. "Microsoft Agent 365" is explicitly framed as a centralized control plane for managing "digital labor." That kind of oversight was previously reserved for HR.

Translation: The unit of production is no longer the team. It is the workflow. And the architect of the workflow owns the leverage.

What This Means

(For the reader who is not a CTO.)

You are not insulated from this. You are downstream of it.

If you are not building AI agent systems, you are not safe from them.

Your job is probably not going to be replaced by a single AI model. But it may be replaced by an orchestrated system: a hierarchy of specialized agents that collectively handles 80% of what you do, leaving the remaining 20% to be picked up by one AI-enabled senior person who supervises the stack.

Sound familiar?

The counterintuitive part:

The most valuable skill in the next three years is not coding. It is not prompt engineering. It is the ability to decompose complex work into agent-executable steps. To look at a workflow and understand which pieces can be handed to specialized agents, at which checkpoints human judgment is genuinely necessary, and how to build the quality-control layer that catches errors before they cascade.

That is a design skill.

An architectural skill.

A systems skill.

It is not being taught anywhere in the current education system at meaningful scale.

Google's 2026 Responsible AI Report introduced the concept of a "buddy agent." A secondary agent that monitors primary agents in real time, ensuring they remain compliant during long multi-turn interactions.

Even the agents need supervisors now.

The question is whether that supervisor stays human or gets handed off to another agent.

For now, it is still often human.

For how long depends entirely on how quickly the trust problem is resolved.
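One possible reading of the "buddy agent" idea, sketched in code: a second process that checks every turn of the primary agent against a set of policies. This is an assumption-laden illustration, not Google's design. The policy shown is a toy rule, and a real monitor would run on each turn as it is produced rather than over a finished list.

```python
from typing import Callable, Iterable

Policy = Callable[[str], bool]   # returns True when a message is compliant

def no_account_numbers(msg: str) -> bool:
    """Toy policy: reject any message containing a run of 8 or more digits."""
    digits = 0
    for ch in msg:
        digits = digits + 1 if ch.isdigit() else 0
        if digits >= 8:
            return False
    return True

def buddy_monitor(turns: Iterable[str], policies: list[Policy]) -> list[tuple[int, str]]:
    """Check every turn; return (turn index, policy name) for each violation."""
    violations = []
    for i, msg in enumerate(turns):
        for policy in policies:
            if not policy(msg):
                violations.append((i, policy.__name__))
    return violations

primary_agent_turns = [
    "Your refund has been approved.",
    "It will go to account 1234567890.",
]
print(buddy_monitor(primary_agent_turns, [no_account_numbers]))
```

Whether the thing reading that violations list is a person or another agent is exactly the open question.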

Risks

(The bill is coming due. Most companies have not budgeted for it.)

The hallucination cascade is structural. It will not be fixed by a model update.

As long as agents chain outputs without independent verification, errors compound silently. Enterprises deploying agent systems in regulated industries (legal, financial, healthcare) are building accountability structures that do not yet align with their liability exposure.
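The compounding is easy to put numbers on. The sketch below uses a deliberately simple model: stages fail independently, and a verifier catches (and corrects) a fixed fraction of each stage's errors before they are passed on. The 95% per-stage accuracy and 90% catch rate are illustrative assumptions, not measurements.

```python
def chain_accuracy(stage_accuracy: float, stages: int, catch_rate: float = 0.0) -> float:
    """Probability that an n-stage chain finishes with no uncaught error.

    Assumes independent stages and a verifier that catches a fixed fraction
    of each stage's errors; both are simplifications for illustration.
    """
    uncaught_error = (1 - stage_accuracy) * (1 - catch_rate)
    return (1 - uncaught_error) ** stages

for n in (1, 5, 10):
    unverified = chain_accuracy(0.95, n)
    verified = chain_accuracy(0.95, n, catch_rate=0.9)
    print(n, round(unverified, 3), round(verified, 3))
```

In this model, ten stages that are each right 95% of the time produce a clean result only about six times in ten. Add a check at every boundary and the same chain holds above 95%. The model update does not change that math. The verification layer does.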

Agent sprawl is already a governance crisis.

Companies that deployed point solutions in 2025 are now managing dozens of one-off agents with no unified observability, no consistent security model, and no end-to-end workflow owner. The cost of cleaning this up is not yet showing up in earnings calls.

It will.

The cancellation wave is coming.

Gartner's projection that 40% of agentic AI projects will be canceled by the end of 2027 is not pessimistic. It is the historical failure rate of enterprise technology initiatives, applied to a technology being deployed at unprecedented speed. The companies that survive will be the ones that started narrow and built trust systematically. Not the ones that deployed ambitiously and explained failures retroactively.

The accountability gap is getting larger, not smaller.

Every orchestrated agent system needs a human to be legally responsible for its outputs. As systems scale and humans supervise thousands of agent interactions simultaneously, the quality of that oversight degrades.

Harvard Law identified the dynamic precisely: regulations keep mandating human sign-off while completely failing to grapple with whether those humans have the bandwidth for meaningful review.

Translation: The signature is real. The "actually reading the document" part is increasingly imaginary.
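The bandwidth problem is plain division. The volumes below are illustrative assumptions, as is the generous premise that a reviewer spends a full eight hours a day doing nothing but reviewing.

```python
def seconds_per_review(items_per_day: int, review_hours: float = 8.0) -> float:
    """Average time one reviewer can give each item in a working day."""
    return review_hours * 3600 / items_per_day

for items in (50, 500, 5000):
    print(items, round(seconds_per_review(items), 1))
```

At fifty items a day, each one gets nearly ten minutes. At five thousand, each one gets under six seconds. The sign-off requirement is identical in both cases.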

Concentration risk.

The infrastructure layer (MCP servers, A2A protocol implementations, orchestration platforms) is consolidating around a small number of platforms. Microsoft, Google, and OpenAI are each positioning themselves as the operating system for enterprise agent fleets.

The supply chain war for intelligence described in Part 2 is now playing out at the orchestration layer.

The entity that controls the orchestration layer controls what the agents can and can't do.

Opportunities

(For the people willing to read the spec instead of the headline.)

Orchestration design is a skills vacuum.

Most organizations have exactly zero people who genuinely own multi-agent workflow design. The ability to decompose business processes into agent-executable steps, design verification architecture, and build observability into the system is extraordinarily rare and extraordinarily valuable.

The window to become that person in your organization (before they hire from outside or bring in a specialized consultancy) is measured in months.

Trust infrastructure has a real business model.

Every enterprise deploying agent systems needs audit trails, compliance layers, output verification, and human-in-the-loop protocols. The EU AI Act mandates human oversight for high-risk systems starting August 2026.

The companies building credible governance infrastructure (not compliance theater, but actual trust architecture) have a moat no model update can erase.

The solo-operator model is real and accessible.

The one-person company powered by an agent stack is not theoretical. Three founders have already demonstrated it at scale in the first quarter of 2026. The required skills are within reach for someone who understands workflow design, has domain expertise, and is willing to build iteratively.

This is the highest-leverage individual position in the new economy.

Narrow vertical agent systems are a growth market.

General-purpose horizontal orchestration is hard and failure-prone. Narrow, industry-specific agent systems built for legal document review, clinical trial management, financial compliance, or supply chain exception handling have well-defined workflows, measurable outcomes, and buyers who understand the value.

This is where the immediate enterprise spending is going.

The verification layer is an employment category.

As agents scale, demand grows for humans who can accurately verify complex outputs. Lawyers reviewing AI-drafted contracts. Analysts auditing AI-generated financial models. Clinicians reviewing AI diagnostic recommendations.

Being the trusted verification expert in an agent-powered workflow is a better job than the generic version of that role was three years ago. Higher leverage. Higher accountability. Considerably harder to automate away.

The Closing Takeaway

Parts 1, 2, and 3 of this series made the case that the economy is being restructured. And that the restructuring is moving faster than the institutions designed to manage it.

Part 4 is the mechanism.

Multi-agent orchestration is not a future technology being hyped at conferences. It is the operational architecture currently absorbing the economic functions described in earlier parts of this series. The hiring freezes. The collapse of entry-level roles. The "productivity gains" in the quarterly reports.

These are what it looks like when companies replace human workflows with coordinated agent systems.

The system is messier than the keynotes suggest. The failure rate is significant. The governance infrastructure is being built in real time, under pressure, with inadequate tooling and no meaningful regulatory framework yet in place.

But the direction of travel is not ambiguous.

The question was never whether this was happening.

The question (still open, still consequential) is who holds the position of Architect when it does.

That position is not filled by waiting.

The org chart is being rewritten.

You can be in it. Or you can be replaced by it.

Pick.

This is Part 4 of The Last Economy series. Part 1 covered the cost delta and the end of the old employment contract. Part 2 mapped the 24-month transition timeline. Part 3 asked what humans do when work is optional. Part 4 is the mechanism. Part 5 will examine who is actually building the replacement: the founders, the governments, and the institutions racing to define the new contract before the old one finishes collapsing.