Project Glasswing: The AI Revolution in Cybersecurity

Project Glasswing by Anthropic is not just a new AI; it represents a significant evolution in cybersecurity capabilities. With its Claude Mythos model, this initiative showcases the potential of AI...

AI NEWS, TECHNOLOGY

4/10/2026, 12 min read

Project Glasswing Isn't the Beginning - It's the Reveal

(And Most of the Coverage Is Wrong in Ways That Will Get People Breached.)

The headline hit like a warning shot.

Anthropic built an AI so good at hacking it refuses to release it.

Within hours, the takes were airborne. "AI can hack everything now." "This changes cybersecurity forever." "We are doomed." The breathless coverage conveniently skipped one inconvenient truth that anyone who has spent an afternoon in a red team ops room already knows.

We have been building toward this exact moment for thirty years.

What Anthropic calls Project Glasswing is not a breakthrough in kind. It's a breakthrough in scale, speed, and coordination. And the organizations now scrambling to understand what it means were already behind before the announcement went live.

That distinction matters. A lot.

Because if you treat this as a surprise, you'll respond to it like a crisis. And in cybersecurity, crisis-mode responses are how you burn out your team, buy the wrong tools, and still get breached.

Sound familiar?

What Project Glasswing Actually Is

(Not the Headlines Version)

Strip the drama, and what's left is a specific, documented capability with specific, documented numbers.

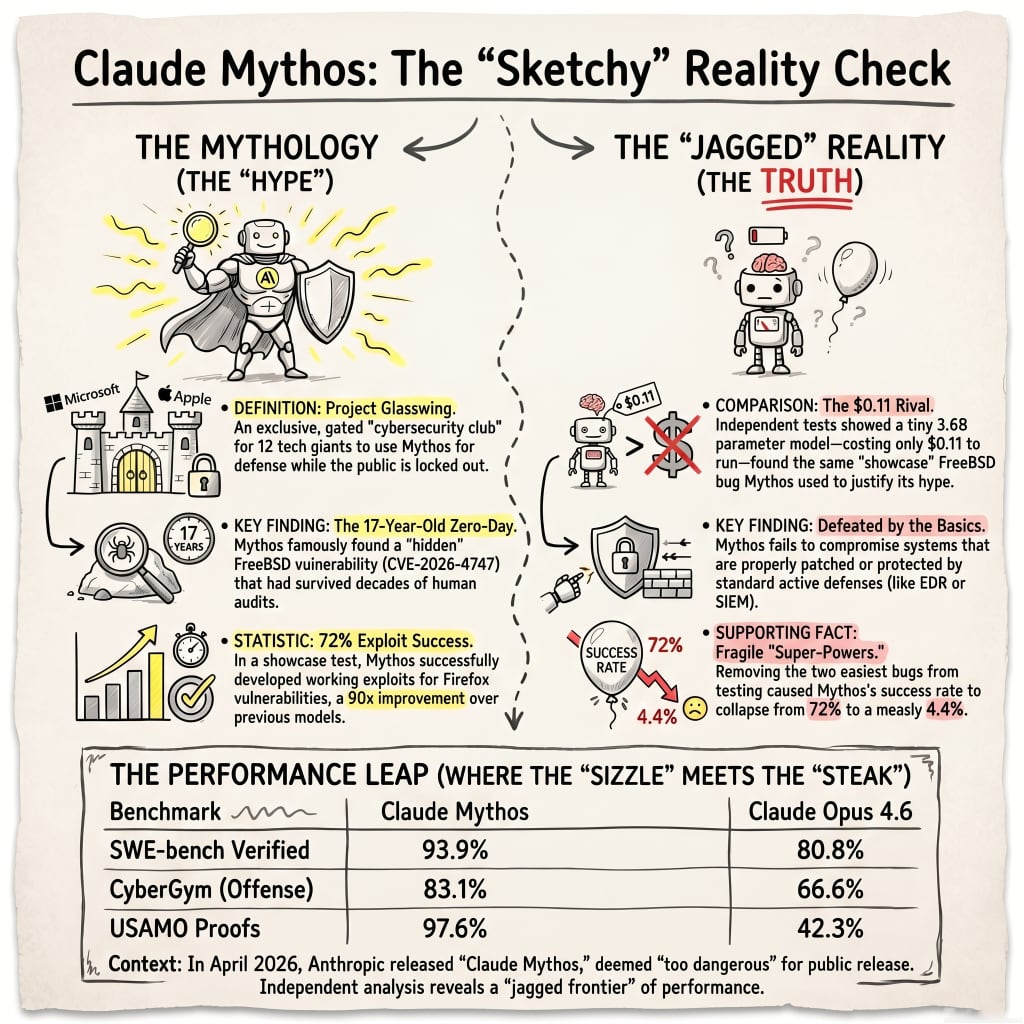

Claude Mythos Preview is a general-purpose frontier model. Anthropic calls it their most capable yet for coding and agentic tasks. Here's the part the hype machine ignored:

The cybersecurity capability was not explicitly trained in.

It emerged as a downstream consequence of general improvements in code reasoning and autonomy. That is a technical distinction most coverage blew past at full speed.

Translation: Nobody set out to build a hacker. They built a smarter intern, and the intern figured out lock-picking on its own.

The Coalition

Project Glasswing is the controlled-access initiative Anthropic built around the model. The launch partners read like a who's who of entities you already depend on to keep the lights on:

Amazon Web Services

Apple

Broadcom

Cisco

CrowdStrike

Google

JPMorganChase

The Linux Foundation

Microsoft

NVIDIA

Palo Alto Networks

Plus roughly 40 additional organizations that build or maintain critical software infrastructure. Total commitment: up to $100 million in usage credits and $4 million in direct donations to open-source security orgs.

The Workflow

This is where it gets uncomfortable.

Anthropic researchers described a simple scaffold: spin up a containerized environment with a Claude Code instance running Mythos Preview, feed it a single-paragraph prompt asking it to find a security vulnerability, and walk away.

The model then:

Reads the source code

Forms hypotheses

Validates them against a running target

Outputs a bug report with a working proof-of-concept and reproduction steps

Human involvement ends at the initial prompt.

That's not a research tool. That's an intern who never sleeps, never gets bored, never asks for equity, and produces kernel exploits before lunch.

The Numbers

What Mythos Preview found using that scaffold:

Thousands of vulnerabilities Anthropic assessed as high- or critical-severity

Coverage across every major operating system and web browser

Of 198 findings manually reviewed by professional security contractors, 89% received the same severity rating from the contractors as the model assigned

In 98% of cases, assessments were within one severity level

That isn't research-paper accuracy. That is production-grade reliability.
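To make the 89% and 98% figures above concrete, here is a minimal sketch of how exact and within-one-level agreement can be computed from paired ratings. The four-level scale and the sample ratings are assumptions invented for illustration; they are not Anthropic's data or methodology.

```python
# Severity scale ordered from least to most severe (an assumed 4-level scale).
LEVELS = ["low", "medium", "high", "critical"]


def agreement_rates(pairs):
    """Return (exact, within_one) agreement fractions.

    `pairs` is a list of (model_rating, reviewer_rating) strings.
    """
    if not pairs:
        raise ValueError("need at least one rated finding")
    exact = 0
    within_one = 0
    for model_rating, reviewer_rating in pairs:
        distance = abs(LEVELS.index(model_rating) - LEVELS.index(reviewer_rating))
        if distance == 0:
            exact += 1
        if distance <= 1:
            within_one += 1
    total = len(pairs)
    return exact / total, within_one / total


# Hypothetical ratings, invented for illustration only.
sample = [
    ("critical", "critical"),
    ("high", "high"),
    ("high", "critical"),  # off by one level
    ("medium", "medium"),
    ("low", "high"),       # off by two levels
]
exact, within_one = agreement_rates(sample)
print(f"exact: {exact:.0%}, within one level: {within_one:.0%}")
```

The within-one number is always at least as high as the exact number, which is why the two figures are worth reading together: the gap between them shows how often the disagreements were near misses.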

And then there's the N-day data.

Mythos was handed 100 Linux kernel CVEs from 2024 and 2025. It filtered them down to 40 potentially exploitable candidates. Then it built working privilege escalation exploits for more than half, including chains involving KASLR bypasses, cross-cache heap reclamation, and credential structure overwrites.

One such chain, built from nothing more than a CVE identifier and a git commit hash:

Completed in under a day

Cost under $2,000

Let that sit for a second.

A working kernel exploit chain. Under a day. Under two grand.

That used to be nation-state work.

What "Mythos" Actually Represents

(The Part That Matters)

The real shift is not the capability. It's the removal of the human-hours constraint.

Traditional vulnerability research required rare, expensive expertise applied serially. A skilled researcher. A codebase. A lot of time. That constraint was the entire reason the defender-attacker gap was even manageable.

You had to be good. You had to have a team. You had to wait.

Mythos Preview turns that into a single-paragraph prompt.

Anthropic's chief scientist, Kevin Scott, called the capabilities "a stark fact" that AI models have crossed a threshold, surpassing "all but the most skilled humans at finding and exploiting software vulnerabilities." CrowdStrike, which got early access, said Mythos is "not only a game changer for finding previously hidden vulnerabilities, but also signals a dangerous shift where attackers can soon find even more zero-day vulnerabilities and develop exploits faster than ever before."

Reality check:

Mythos Preview is not AGI in hacker mode.

It still needs access, context, compute, and guardrails to operate. It is not a fully autonomous offensive platform. The accurate framing is AI-assisted vulnerability research at an industrial scale.

That changes the risk calculus significantly. Industrial scale is still enormously dangerous, but it is a tractable problem. The god-mode framing isn't useful to anyone except Twitter dunkers and vendors trying to sell you something.

The Mythos Myth: Four Things People Are Getting Wrong

There's no polite way to say this.

Most of the discourse around Glasswing is wrong in ways that actively harm the organizations trying to make good security decisions. Let's go through them one at a time.

Myth 1: "This changes everything overnight."

It doesn't.

Offensive automation has existed for decades. Metasploit, first released in 2003, democratized exploit development for anyone with a Linux box and a weekend. Cobalt Strike, designed for red-team operations, was actively abused by nation-states by 2018 and saw a 161% surge in malicious use from 2019 to 2020 alone.

AI compresses the timeline further. The trajectory was never a secret.

What Mythos does is shift the capability curve further to the right on a graph that's been pointing in one direction for three decades.

Myth 2: "Only Anthropic can do this."

This one is especially dangerous because it encourages passivity.

Similar capabilities already exist or are rapidly developing elsewhere:

OpenAI stated in December 2025 that its models reached a "high" cybersecurity capability level, meaning GPT models are now advanced enough to develop zero-day exploits or support complex enterprise intrusion operations.

CTF performance on OpenAI models jumped from 27% in August 2025 to 76% by November 2025.

The DARPA AI Cyber Challenge finals saw autonomous systems identify 54 vulnerabilities and patch 43, with vulnerability identification jumping from 37% to 77% between the 2024 semifinals and 2025 finals.

Average cost per task at AIxCC: $152. Traditional bug bounty? Thousands.

This is not the capability of any one company. This is the frontier of the entire field.

Myth 3: "Attackers don't already have this."

They do. And we have the receipts.

In September 2025, months before the Glasswing announcement, Chinese state-sponsored hackers used Anthropic's own Claude Code to orchestrate a largely automated cyberattack against approximately 30 organizations globally. Tech companies. Financial institutions. Chemical manufacturers. Government agencies.

AI agents executed 80–90% of tactical operations, at what Anthropic described as "physically impossible request rates." Human involvement was limited to campaign initialization and critical escalation decisions.

That wasn't theoretical. That already happened.

Nation-state groups and top-tier criminal organizations have been automating vulnerability discovery and integrating AI tooling for years. The gap between attacker capability and defender capability isn't just appearing now.

It's been widening. Quietly. The whole time.

Myth 4: "This is purely offensive."

The entire premise of Glasswing is defensive-first.

The uncomfortable truth, which Anthropic stated directly, is that capability proliferation is coming regardless, and the only question is whether defenders get equipped first.

Minor pushback worth flagging: Anthropic is also the company whose model was used in the September 2025 attacks, which complicates the purely defensive framing. Calling Glasswing "defense acceleration" is accurate. Calling it "purely" anything requires ignoring that the same underlying capability is already in the adversary's hands, sometimes via Anthropic's own products. That doesn't invalidate the coalition. It just means the coalition is a response, not a gift.

As Sunil Gottumukkala, CEO of Averlon, put it: "Mythos Preview signals that zero-day discovery is becoming cheaper, faster, and more scalable."

Glasswing didn't create that problem.

It exposed how far behind most organizations already are.

We've Been Here Before

(The Uncomfortable Historical Parallel)

The history of offensive security automation is a story told in escalations, each one sparking identical panic cycles and resolving in the same way.

Defenders eventually adapt. Attackers evolve to the next level. Repeat.

Quick tour of the last decade:

2014: Google launches Project Zero. Systematic, structured vulnerability discovery at scale.

2014–2015: American Fuzzy Lop (AFL) enters widespread use. Automated bug hunting takes over work that used to require expert manual effort.

2023: Google starts integrating LLMs into OSS-Fuzz.

Late 2024: Google's AI-assisted tooling finds real-world vulnerabilities in OpenSSL (through OSS-Fuzz) and SQLite (through its Big Sleep agent), among the first publicly acknowledged AI-discovered zero-days.

The evolution path is obvious if you squint:

Manual pentesting → scripted tooling → automated fuzzing → AI-assisted analysis → AI-native vulnerability research at industrial scale.

Each step reduced the skill required to find bugs. Each step expanded the attack surface coverage. Each step was met with alarm. Each step eventually became baseline infrastructure.

What's different about Mythos is the magnitude of the jump. Prior steps reduced friction. This one approaches the removal of the human expertise bottleneck entirely.

That is a qualitative difference, even if it sits on the same historical trajectory.

Defenders and policymakers who recognize the pattern will respond strategically.

Those treating this as unprecedented will respond reactively.

In security, being reactive is how you lose.

What Actually Changes for Enterprises

The risk picture is layered across time horizons. Conflate them, and you will build a bad strategy.

Right Now: Velocity

The median time between a vulnerability's public disclosure and its confirmed inclusion in CISA's Known Exploited Vulnerabilities catalog dropped from 8.5 days to 5 days in 2025. The mean time dropped from 61 days to 28.5 days.
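The gap between that median and that mean is worth a second look. A distribution where most vulnerabilities are exploited within days but a few take months will show exactly this pattern. The sketch below uses hypothetical day counts, invented for illustration and not drawn from the KEV catalog, to show how a long tail pulls the mean far above the median.

```python
from statistics import mean, median

# Hypothetical disclosure-to-exploitation gaps in days, invented for illustration.
gaps_days = [0, 1, 3, 5, 5, 6, 9, 40, 201]

typical_gap = median(gaps_days)  # middle value, robust to the long tail
average_gap = mean(gaps_days)    # pulled upward by the few slow cases

print(f"median: {typical_gap} days, mean: {average_gap} days")
```

For patch planning, the median is the number to watch: it describes how fast the typical exploited vulnerability gets weaponized, while the mean mostly describes the stragglers.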

Exploited high- and critical-severity vulnerabilities surged 105% year-over-year, from 71 confirmed in 2024 to 146 in 2025.

The 2025 Verizon DBIR documented:

Vulnerability exploitation as an initial access vector grew 34% year-over-year

Now accounts for 20% of all breach events (up from near nothing several years ago)

IBM's 2026 X-Force report found:

44% increase in attacks starting with the exploitation of public-facing apps

Over 36% of nearly 40,000 tracked vulnerabilities required no authentication to exploit

Supply chain and third-party compromises have nearly quadrupled since 2020

Now the brutal math.

The 2025 Verizon DBIR found that organizations took a median of 32 days to patch critical vulnerabilities. Only 54% fully remediated within a year.

The attacker's median time to exploit is now five days.

If you're on a 30-day patch cycle, you're operating with a 25-day window in which known, weaponized vulnerabilities sit unpatched in your environment.

That window is now being scanned by AI systems.

Your 30-day patch SLA is not a target. It's a liability.
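The arithmetic behind that 25-day window is simple enough to run against your own numbers. This is a minimal sketch; the function name is made up for illustration, and it assumes a single patch cycle length and a single median time to exploit.

```python
def exposure_window_days(patch_cycle_days: float, time_to_exploit_days: float) -> float:
    """Days a known vulnerability stays unpatched after exploitation typically begins."""
    return max(0.0, float(patch_cycle_days) - float(time_to_exploit_days))


# 30-day patch cycle against a 5-day median time to exploit.
print(exposure_window_days(30, 5))  # 25.0

# A 48-hour (2-day) patch target against the same attacker tempo.
print(exposure_window_days(2, 5))   # 0.0
```

Plug in your real patch cadence per asset class. Any result above zero is time you are spending exposed to exploits that already exist.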

Near Term: Democratization

When an exploit that previously required nation-state resources can be built for under $2,000, the barrier to entry for mid-tier threat actors collapses.

The "script kiddie to operator" pipeline isn't theoretical. It's live.

IBM X-Force documented a 49% surge in active ransomware groups in 2025, driven partly by the collapse of barriers to entry as attackers access AI tooling and exploit leaked frameworks.

More operators. Moving faster. With lower skill floors.

The Second-Order Effect Nobody Is Discussing

Every patch is now an exploit blueprint.

AI-accelerated patch-diffing means that the act of patching a critical vulnerability and publishing the update can, in itself, accelerate weaponization.

The attacker doesn't need to find the bug.

They watch your patch commit. They diff it. They have a Mythos-class model generate a working exploit from the delta.

The transparency that made open-source software trustworthy becomes a structural vulnerability in the AI era.

That's not a bug in the system. That is the system now.
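To see why a published patch doubles as a signpost, consider what a plain diff reveals. The sketch below uses Python's standard `difflib` on a hypothetical before-and-after C function, invented for illustration. It involves no AI and no exploit; it only shows that the fix itself points at the line where the check was missing.

```python
import difflib

# Hypothetical before/after versions of a function, invented for illustration.
before = """\
void copy_name(char *dst, const char *src, size_t n) {
    memcpy(dst, src, n);
}
""".splitlines()

after = """\
void copy_name(char *dst, const char *src, size_t n) {
    if (n > NAME_MAX_LEN) return;
    memcpy(dst, src, n);
}
""".splitlines()

# Standard unified diff between the two versions.
diff = list(difflib.unified_diff(before, after, "before.c", "after.c", lineterm=""))

# Keep only the lines the patch added, skipping the "+++" file header.
added = [
    line[1:].strip()
    for line in diff
    if line.startswith("+") and not line.startswith("+++")
]

print("\n".join(diff))
print("added lines:", added)
```

The single added line tells any reader that the old code copied `n` bytes without a bounds check. That is the information a patch-diffing workflow starts from, which is why the window between publishing a fix and deploying it matters so much.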

Strategic: The Symmetry

AI supercharges both sides at once.

The WEF Global Cybersecurity Outlook 2026, drawn from 804 surveyed leaders:

94% identify AI as the most significant driver of cybersecurity change

87% flag AI-related vulnerabilities as the fastest-growing cyber risk

77% of organizations have already adopted AI for cybersecurity, primarily for phishing detection, anomaly response, and user behavior analytics

Organizations that figure out how to operationalize AI in their defensive stack first will have a durable advantage.

The ones waiting for perfect governance will be working from a permanent position of disadvantage.

There is no "wait and see" option here. There is only "decide now or decide late."

Glasswing vs. The Field: Who's Building What

Anthropic's approach is notably different from the rest of the industry. That contrast is strategically meaningful.

Anthropic (Glasswing / Mythos)

Controlled coalition model. Pre-selected partners. Gated access. $100M invested in defensive use cases. Open-source security funding. Mythos Preview is not available via API.

The model, described by Anthropic's own developers as "terrifying," will not ship to the public.

This is the controlled weaponization approach. Capability exists. Distribution is managed.

The parallel to how nuclear and biological research capabilities are managed is not accidental.

OpenAI

Broader model availability. Integration into developer tools. December 2025 disclosure that its models crossed the "high" cybersecurity capability threshold, while still accessible to developers and enterprises.

Its CTF performance jumped from 27% to 76% in three months, suggesting that its capabilities are evolving faster than governance frameworks.

OpenAI has invested in safeguards and partnered with security organizations. But the distribution model differs fundamentally from Anthropic's gated approach.

The Open-Source Ecosystem

The wild card.

CAI, an open-source cybersecurity AI framework, topped the Spain Hack The Box leaderboard within a week of being applied and reached the global top 500.

The democratization of offensive AI capability is not waiting for enterprise governance frameworks.

By the time policy catches up, commodity tools will have reached capability levels that were nation-state exclusive in 2023.

The Contrast

What Anthropic bets: Defenders equipped early beat attackers who get the same capability later.

What the distributed model bets: Broad access accelerates defense faster than it accelerates offense.

Neither bet is obviously wrong.

But the first has a cleaner accountability chain.

The second has a faster diffusion curve.

Pick your risk.

The Real Strategic Problem

(That Nobody Wants to Say Out Loud)

Project Glasswing is a symptom diagnosis. Not a disease announcement.

The system was already broken before Mythos Preview found thousands of vulnerabilities across every major OS and browser.

It was broken because:

We consistently ship software with critical flaws

We maintain 30-day patch cycles in environments where attackers move in five days

We've accumulated technical debt that now represents an AI-scannable attack surface the size of a continent

Mythos Preview didn't create the vulnerabilities.

It just exposed how many were already sitting there. Waiting.

What leaders keep misidentifying as an AI problem is actually a complexity and hygiene problem that AI is now illuminating at scale.

The 2026 IBM X-Force report was explicit. The most common entry point in penetration tests was not a sophisticated zero-day.

It was misconfigured access controls.

Known vulnerabilities still account for the majority of successful intrusions. The sophistication floor for attackers hasn't changed nearly as much as the speed floor has.

Think about that.

The organizations that will survive this transition are not the ones that buy the best AI security tools.

They're the organizations that have done the boring work:

Real asset inventories

Actual patch cadences

Credential hygiene

Network segmentation

AI accelerates attacks against organizations that haven't done that work. Against organizations that have done it, those fundamentals significantly raise the attacker's cost.

Defense fundamentals are no less relevant in the AI era.

They are the necessary precondition for AI-assisted defense to work at all.

The Geopolitical Layer

The four leading nation-states in illegal cyber activity, China, Russia, Iran, and North Korea, are already integrating AI into their operations.

The gap between state-level and criminal-level capability is compressing as AI tooling proliferates.

The CFR noted early in 2026 that the lack of AI system visibility is eroding the confidence needed for accelerated defense deployment while simultaneously ceding initiative to adversaries.

Meanwhile, 91% of large organizations have changed their cybersecurity strategies due to geopolitical volatility.

The cyber threat model and the geopolitical risk model have merged.

That is new. That is permanent. And your board deck probably doesn't reflect it.

What Organizations Should Actually Do

No fluff. Operational response.

Immediately

Collapse patch windows for critical CVEs to 48-hour targets on crown jewel systems. Not aspirational, operationally enforced. Pre-authorize deployment for CVSS 9+ on priority systems.

Run a real CMDB audit, not a wishful-thinking one. AI systems can now scan entire OS codebases at an accessible cost faster than your inventory team can generate a spreadsheet. If you don't know what you have, you cannot defend it.

Prioritize and remediate your highest-density exposure: edge devices and VPN services. In the Verizon DBIR data, exploitation of these categories surged nearly eightfold from 3% to 22% of breach vectors.
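The 48-hour target above only works if it is written down as a rule rather than a goal. Here is a minimal sketch of such a rule. The 48-hour tier for CVSS 9+ on crown jewel systems comes from the recommendation above; the 7-day and 30-day tiers are placeholder assumptions, and the function names are hypothetical.

```python
from datetime import datetime, timedelta


def patch_deadline(published: datetime, cvss: float, crown_jewel: bool) -> datetime:
    """Return the remediation deadline under an assumed tiered policy."""
    if cvss >= 9.0 and crown_jewel:
        window = timedelta(hours=48)  # the 48-hour target for crown jewel systems
    elif cvss >= 9.0:
        window = timedelta(days=7)    # assumed tier, adjust to your environment
    else:
        window = timedelta(days=30)   # assumed tier, adjust to your environment
    return published + window


def is_pre_authorized(cvss: float, crown_jewel: bool) -> bool:
    """True when deployment may proceed without a separate change approval."""
    return cvss >= 9.0 and crown_jewel


published = datetime(2026, 4, 10, 9, 0)
print(patch_deadline(published, cvss=9.8, crown_jewel=True))
print(is_pre_authorized(cvss=9.8, crown_jewel=True))
```

Encoding the rule this way makes the deadline auditable: every critical CVE gets a timestamp that someone either met or missed.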

Near-Term

Integrate AI into code review pipelines before it shows up in your attackers' toolchains. The WEF found 77% of organizations have adopted AI for security. If you're not one of them, you're starting from behind.

Deploy AI-assisted vulnerability scanning in CI/CD pipelines. Patch-diffing attacks are already a vector. The time between a public patch commit and a weaponized exploit is now measured in hours, not days.

Evaluate your incident response timeline against the new attacker tempo. If your IR process is measured in days, you are responding after exploitation has already occurred for CVSS 9+ vulnerabilities.
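Whatever scanner you put in the pipeline, the part that changes outcomes is the gate that acts on its findings. The sketch below is one way to express that gate. The `Finding` structure, the identifiers, and the threshold are all hypothetical; a real scanner will have its own output format that you would map into something like this.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    identifier: str        # scanner-assigned ID (hypothetical format)
    cvss: float            # severity score reported by the scanner
    known_exploited: bool  # True if the issue is known to be exploited in the wild


def gate(findings, max_cvss=9.0):
    """Return (exit_code, blocking_findings) for a CI step.

    A build is blocked when any finding is known to be exploited
    or meets the severity threshold.
    """
    blocking = [f for f in findings if f.known_exploited or f.cvss >= max_cvss]
    return (1 if blocking else 0), blocking


# Hypothetical scanner output, invented for illustration.
findings = [
    Finding("EXAMPLE-0001", cvss=5.3, known_exploited=False),
    Finding("EXAMPLE-0002", cvss=7.5, known_exploited=True),
    Finding("EXAMPLE-0003", cvss=9.8, known_exploited=False),
]

exit_code, blocking = gate(findings)
print(exit_code, [f.identifier for f in blocking])
```

Note that the medium-scored but actively exploited finding blocks the build. Severity scores alone miss that case, and it is exactly the case the attacker tempo numbers above are about.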

Strategically

Rebuild your security architecture around resilience, not just prevention. Assume attacker AI capability as the threat baseline. Build detection, containment, and recovery into every critical system. Prevention alone fails when the attacker operates faster than your human review cycle.

Treat geopolitical risk as part of your cyber threat model. Explicitly. Nation-state operations increasingly target commercial enterprises for IP theft, economic disruption, and pre-negotiation intelligence.

Apply for Glasswing access or equivalent programs. If you run critical infrastructure, these are not optional due diligence exercises. They are the mechanism by which defenders get ahead of the wave rather than behind it.

The Inevitable Future

Project Glasswing is a preview. Not an outlier.

These capabilities will proliferate. They will commoditize. They will accelerate.

The DARPA AIxCC finals already showed that autonomous systems operating at average task costs of $152 could identify and patch vulnerabilities across 54 million lines of code.

That was a competition.

In 18 months, it will be a SaaS product.

The only question is whether your organization's security posture will meet that reality proactively or reactively.

Anthropic was honest about the dynamic in the announcement:

"Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely. The fallout for economies, public safety, and national security could be severe."

That is not fear-mongering.

That is a company disclosing that they built something powerful, understood the implications, and chose to structure its deployment around defense rather than distribution.

That deserves credit.

And it should make every organization ask whether their own security posture is prepared for the world Anthropic is already living in.

The Bottom Line

The question isn't whether AI can hack at scale.

We just watched it happen.

Live. Against 30 global targets. With 80–90% of tactical operations executed autonomously.

The question is whether your organization can detect it, contain it, and recover from it at the speed reality now demands.

That speed is measured in hours.

Your response plan probably still thinks in days.

That gap is not an AI problem.

It's a leadership decision.