Claude Cowork: Your AI Chief of Staff

4/26/2026 | 12 min read

Claude Cowork & Claude Code: The Thinking Chief of Staff

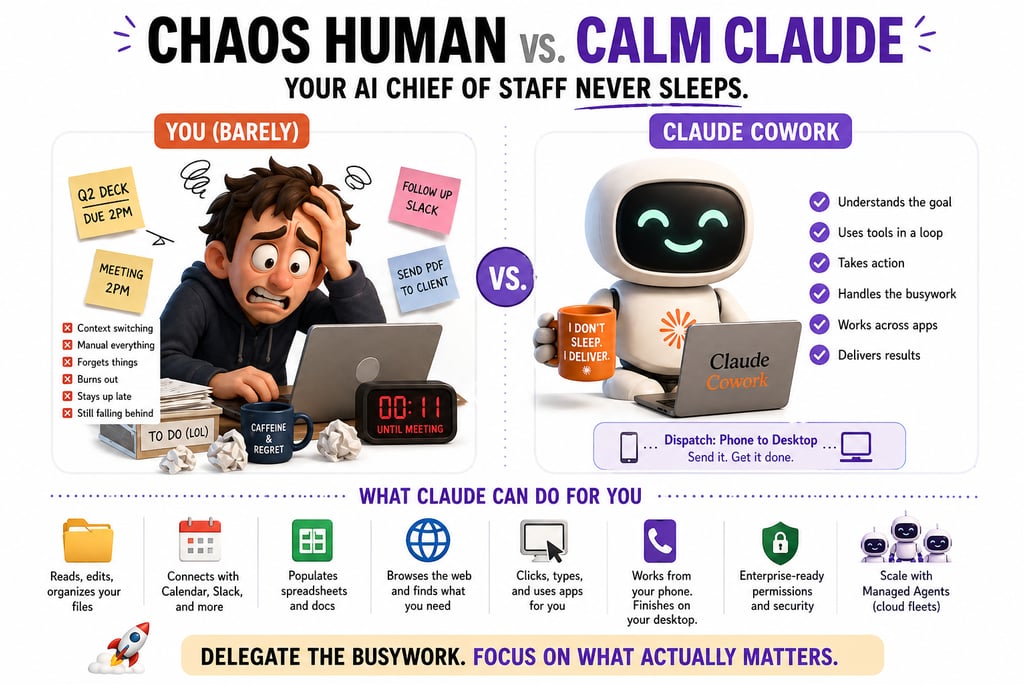

Anthropic built a digital colleague that runs tasks on your computer, takes orders from your phone, and was itself built in a week and a half using AI. Here's what's real, what's limited, and what it actually takes to make it work.

Part 2 of the AI Chief of Staff Series | 3XNL.ai

The Moment It Clicked

You are running late. A meeting starts in eleven minutes.

The presentation is on your desktop, not in the calendar invite where the client expects it. You grab your phone, fire off a quick message to Claude: "Export the Q2 deck as a PDF and attach it to today's 2pm client meeting."

By the time you walk into the building, it is done.

Not "here are the steps to do that yourself." Not "I found a tutorial." Not "I'm unable to access your local files."

Done.

That is Claude Cowork. And if you are still thinking of Claude as a fancy search engine with better grammar, you are about three product cycles behind.

That's not a rhetorical flourish. Three actual product cycles. We'll get there.

What Just Shipped (And Why the Timeline Should Bother You)

The pace of what Anthropic has shipped in the first quarter of 2026 is genuinely disorienting.

Let's establish the record.

January 12, 2026. Anthropic launched Claude Cowork, a dedicated desktop application (Mac-first) that lets you read, edit, and organize files directly on your machine. Not a browser tab. Not a cloud widget. A local agent with access to your actual files.

March 17, 2026. Dispatch launched inside Cowork. A research preview that pairs your phone with your desktop. One persistent conversation thread across devices. You send a task from your phone. Your desktop executes it. You come back to finish work.

April 8, 2026. Claude Managed Agents hit public beta: cloud-hosted infrastructure for enterprises to deploy fleets of Claude agents at scale. Sandboxed execution. Multi-agent coordination. Scoped permissions. Automatic error recovery. Priced at $0.08 per runtime hour.

Three major releases in less than ninety days.

The detail that should not slide past you: the team that built Cowork built it in roughly a week and a half using Claude Code itself.

Stop for a moment and sit with that.

A team of engineers used the previous version of their AI tool to build the next version. In ten days. The recursion is doing real work in that sentence.

Cowork vs. Code: Two Tools, Two Completely Different Philosophies

These are not upgrades of each other. Treating them as a ladder you climb is the first mistake people make.

Claude AI, Claude Code, and Claude Cowork are different tools built for different environments, users, and work.

Here is the honest breakdown:

Who it's for. Cowork is built for knowledge workers and non-technical users. Code is built for developers and engineers. If you have never touched a terminal, Code is not your starting point. It is the deep end of the pool, and the deep end is full of git commands.

Environment. Cowork runs as a desktop GUI application. Code lives in the terminal and CLI. That distinction shapes everything downstream.

Control model. With Cowork, you define the outcome, and Claude figures out execution. With Code, you direct every step. More control. More responsibility. Higher ceiling.

Memory. Cowork uses project-level briefing documents. Code uses persistent CLAUDE.md files with auto-memory that survive across sessions and cascade to every future task that references them.

File access. Both tools access and edit files. Cowork handles local files and documents. Code adds full codebase context.

Phone control. Cowork supports phone-to-desktop control via Dispatch. Code does not.

Token efficiency. Cowork burns more context: screenshots and image processing add up fast. Code is significantly more efficient. Budget accordingly. Or discover the difference at the end of the month, in the only language LLM platforms speak fluently: invoices.

Reliability on complexity. Cowork performs well on simple to mid-complexity tasks. It stalls on high-ambiguity, multi-step workflows. Code runs more reliably because you can catch and correct errors in real time.

The key philosophical split: Cowork is task delegation. Code is command-driven execution.

With Cowork, you describe the outcome once and step away. Claude decides how to get there. With Code, you are in the cockpit the whole time.

Neither is better. One is right for your situation. Know which one before you start.

People who skip this step end up using a desktop GUI to do work that needs a terminal, or a terminal to do work that needs a GUI. They report the same outcome: it sort of works, mostly, until it doesn't.

What "Agentic" Actually Means Here

(Not the Buzzword Version. The Real One.)

The word "agentic" has been sanded smooth by marketing departments. Let's be precise.

Anthropic's own definition: models using tools in a loop. Given a task, the agent autonomously chooses tools, updates its decisions based on the tools' outputs, and iterates until the task is complete.

In practice, for Cowork, that means Claude can open applications on your computer, browse the web, populate spreadsheets, manage files and folders, connect with Google Calendar, Slack, and other integrations, and fall back to simulating keyboard and mouse actions when no native integration exists.

Read that last clause again. Simulating keyboard and mouse actions. Your AI is, in the literal sense, watching your screen and pretending to be you. That's not a metaphor. That's the architecture.
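Stripped to its skeleton, "tools in a loop" looks something like the sketch below. It is a toy, not Anthropic's implementation: the tools (`list_files`, `export_pdf`) and the `scripted_model` function standing in for Claude are all hypothetical, and the "model" follows a fixed script instead of reasoning. What it does show is the loop itself: pick a tool, run it, feed the output back, repeat until the agent decides it is done.

```python
from typing import Callable, Optional

# Hypothetical tools: plain functions standing in for real desktop integrations.
def list_files(folder: str) -> list[str]:
    fake_fs = {"Desktop": ["Q2_deck.pptx", "notes.txt"]}
    return fake_fs.get(folder, [])

def export_pdf(filename: str) -> str:
    return filename.rsplit(".", 1)[0] + ".pdf"

TOOLS: dict[str, Callable] = {"list_files": list_files, "export_pdf": export_pdf}

def scripted_model(history: list[tuple[str, object]]) -> Optional[tuple[str, str]]:
    """Stand-in for the model: chooses the next tool call based on what it has seen.
    Returns None when it judges the task complete."""
    if not history:
        return ("list_files", "Desktop")
    last_tool, last_output = history[-1]
    if last_tool == "list_files":
        deck = next(f for f in last_output if f.endswith(".pptx"))
        return ("export_pdf", deck)
    return None  # the PDF exists: stop

def run_agent(model, tools, max_steps: int = 10) -> list[tuple[str, object]]:
    history: list[tuple[str, object]] = []
    for _ in range(max_steps):           # hard cap so a confused agent cannot loop forever
        call = model(history)
        if call is None:
            break
        name, arg = call
        output = tools[name](arg)        # execute the chosen tool
        history.append((name, output))   # feed the result into the next decision
    return history

print(run_agent(scripted_model, TOOLS))
```

The run produces two steps: the folder listing, then the exported `Q2_deck.pdf`. The `max_steps` cap is the design choice worth copying: an agent that cannot decide it is finished should run out of budget, not run forever.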

For Claude Code, the toolkit expands further: running bash commands, modifying code across multiple files simultaneously, executing Python scripts, spinning up subagents to handle parallel tasks, and, most strikingly, writing approximately 90% of Claude Code's own codebase.

That last one is where the mind starts bending.

The 90% Number Nobody Is Processing Correctly

In early 2026, Anthropic CEO Dario Amodei confirmed that roughly 70–90% of code shipped across Anthropic is now written by AI.

Boris Cherny, the lead engineer on Claude Code, said his personal figure is 100%. He has not written code by hand in over two months. "I shipped 22 pull requests yesterday and 27 the day before, each one 100% written by Claude," Cherny wrote.

Let that land properly.

This is not a claim about the future. This is Anthropic's present-tense operational reality. The people who built the tool are already using it to replace their own knowledge work.

Two reads on this. You need both.

The healthy read: AI is amplifying skilled humans, not replacing them. Cherny still decides what to build, reviews outputs, and directs architecture. The 90% refers to generated code after human direction, not self-directed AI doing whatever it wants.

The uncomfortable read: if the most technically sophisticated AI team in the world has already flipped to near-complete AI-generated output, what does that imply about the timeline for the rest of us?

That is not a rhetorical question.

It is, in fact, the question. The one nobody on the keynote stage answers in plain English. They mumble something about "augmentation" and move on to the next slide.

You should not move on to the next slide.

Building the Real Chief of Staff: What the System Actually Needs

Most people fail at this part. Not because the AI is bad. Because they gave it a persona without giving it a system.

They spin up Claude Cowork, assign it a vague role ("be my Chief of Staff"), and then wonder why it feels like a slightly smarter to-do list.

Here's the problem.

A real Chief of Staff, human or AI, does five things a chatbot never will:

Manages priorities, not just tasks

Tracks commitments over time, not just in the current session

Follows up without being asked

Pushes back when priorities conflict

Creates clarity from chaos, not just summaries of it

Claude is capable of all five. Capability and configuration are different things. You have to build the architecture.

If you don't, what you have is a very articulate parrot with desktop access. That is not nothing, but it is not a Chief of Staff.

Memory Layer

This is where Cowork and Code diverge most practically.

In Code, you build persistent CLAUDE.md instruction files that survive across sessions. Every update to that file cascades to every future task that references it. In Cowork, each Project has a master briefing document: one briefing, every task, every time.

Neither is perfect. Both require intentional setup.

Real-world proof this works: a PayPal financial crime investigator built a Claude agent that reads his emails, Teams chats, and calendar every Monday. It applies the Eisenhower Matrix to prioritize, then builds a recursive context file, asking questions about people and projects it doesn't recognize so that it learns over time.

His words: "I start each week with a new kind of clarity."

That's not a demo. That's a functioning system someone took the time to design.

It's also, importantly, the kind of system most people will not take the time to design. Which is why most people will not have that kind of clarity. The tools are democratic. The discipline is not.
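The prioritization step in that workflow is simple enough to sketch. The code below assumes the hard part, deciding whether each item is urgent and important, has already been done upstream; his description doesn't say how his agent does it. The `Task` type and the example tasks are illustrative, and the quadrant labels are the common Do / Schedule / Delegate / Delete names for the four boxes of the matrix.

```python
from dataclasses import dataclass

@dataclass
class Task:
    title: str
    urgent: bool
    important: bool

# The four Eisenhower quadrants, keyed by (urgent, important).
QUADRANTS = {
    (True, True): "Do",         # urgent and important: handle now
    (False, True): "Schedule",  # important, not urgent: put it on the calendar
    (True, False): "Delegate",  # urgent, not important: hand it off
    (False, False): "Delete",   # neither: drop it
}

def eisenhower(tasks: list[Task]) -> dict[str, list[str]]:
    """Sort task titles into quadrants, preserving input order within each."""
    buckets: dict[str, list[str]] = {q: [] for q in ("Do", "Schedule", "Delegate", "Delete")}
    for task in tasks:
        buckets[QUADRANTS[(task.urgent, task.important)]].append(task.title)
    return buckets

week = [
    Task("Reply to regulator request", urgent=True, important=True),
    Task("Plan Q3 team training", urgent=False, important=True),
    Task("Book conference room", urgent=True, important=False),
    Task("Skim vendor newsletter", urgent=False, important=False),
]
print(eisenhower(week)["Do"])  # ['Reply to regulator request']
```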

Task System

What needs doing, in what order, at what level of urgency? This does not exist by default. You create it.

This sounds obvious. Almost nobody does it.
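A task system does not need to be elaborate to beat "nothing by default." Here is a minimal sketch, assuming just two signals per task: an urgency level (1 = most urgent) and a due date. The `TaskQueue` class is hypothetical, not a Cowork feature; it uses Python's standard `heapq` so the next task out is always the most urgent, with the earliest due date breaking ties.

```python
import heapq
from datetime import date

class TaskQueue:
    """Hypothetical task list that always hands back the most pressing task next."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, date, int, str]] = []
        self._counter = 0  # final tie-breaker: first added, first out

    def add(self, title: str, urgency: int, due: date) -> None:
        # Tuples compare element by element: urgency, then due date, then insertion order.
        heapq.heappush(self._heap, (urgency, due, self._counter, title))
        self._counter += 1

    def pop_next(self) -> str:
        return heapq.heappop(self._heap)[-1]

    def __len__(self) -> int:
        return len(self._heap)

queue = TaskQueue()
queue.add("Draft board memo", urgency=2, due=date(2026, 5, 1))
queue.add("Send client the Q2 PDF", urgency=1, due=date(2026, 4, 27))
queue.add("File expenses", urgency=2, due=date(2026, 4, 30))
print(queue.pop_next())  # Send client the Q2 PDF
```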

Decision Rules

What Claude should escalate versus what it should execute on its own. Without this, you will either micromanage it or wake up to surprises. Neither is the goal.

Surprises, in particular, are not the goal. Surprises in agentic systems tend to involve emails. Sent ones. To people who weren't supposed to receive them.
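Decision rules can start as a few lines of explicit policy. The sketch below is illustrative only: the `Action` fields, the action names, and the `AUTO_APPROVED` set are hypothetical, not settings Cowork exposes. The design choice that matters is the default: anything external, irreversible, or unrecognized escalates to a human, which is exactly where the sent-email surprise gets caught.

```python
from dataclasses import dataclass

@dataclass
class Action:
    kind: str         # e.g. "send_email", "move_file", "draft_email"
    external: bool    # does it reach anyone outside the organization?
    reversible: bool  # can it be cleanly undone?

# Hypothetical allowlist of actions the agent may take without asking.
AUTO_APPROVED = {"move_file", "create_event", "draft_email"}

def decide(action: Action) -> str:
    """Return "execute" or "escalate" for a proposed action."""
    if action.external or not action.reversible:
        return "escalate"  # a sent email cannot be unsent: ask first
    if action.kind in AUTO_APPROVED:
        return "execute"
    return "escalate"      # no rule matched: default to asking, not acting

print(decide(Action("draft_email", external=False, reversible=True)))  # execute
print(decide(Action("send_email", external=True, reversible=False)))   # escalate
```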

Execution Layer

The actual integrations: calendar, email, files, Notion, Slack. Claude Cowork handles many of these natively. Claude Code can reach further with custom MCP (Model Context Protocol) connections.

Feedback Loop

What worked, what failed, what to update. Without this, your system plateaus. With it, it compounds.

The delta between those two outcomes, measured over six months, is significant.

The delta between those two outcomes, measured over 18 months, is the difference between an org that runs on AI and one that ran a pilot once and never quite recovered.
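The feedback loop can be as plain as a tally. This hypothetical `FeedbackLog` records whether each kind of delegated task succeeded and flags the ones failing often enough to warrant rewriting their instructions. The thresholds (`min_runs=3`, `max_failure_rate=0.3`) are arbitrary placeholders; tune them to your own tolerance.

```python
from collections import defaultdict

class FeedbackLog:
    """Hypothetical feedback loop: record outcomes, surface what needs updating."""

    def __init__(self, min_runs: int = 3, max_failure_rate: float = 0.3) -> None:
        self.min_runs = min_runs                  # ignore task types with too little data
        self.max_failure_rate = max_failure_rate  # flag anything failing more often than this
        self.runs: dict[str, list[int]] = defaultdict(lambda: [0, 0])  # [successes, failures]

    def record(self, task_type: str, success: bool) -> None:
        self.runs[task_type][0 if success else 1] += 1

    def needs_update(self) -> list[str]:
        flagged = []
        for task_type, (ok, failed) in self.runs.items():
            total = ok + failed
            if total >= self.min_runs and failed / total > self.max_failure_rate:
                flagged.append(task_type)
        return sorted(flagged)

log = FeedbackLog()
for outcome in (True, True, True, True):
    log.record("weekly_digest", outcome)
for outcome in (True, False, False):
    log.record("invoice_followup", outcome)
log.record("travel_booking", False)  # a single failure: not enough data yet
print(log.needs_update())  # ['invoice_followup']
```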

Dispatch: Small Feature, Massive Implication

Dispatch is technically a minor update. Practically, it is a preview of something much larger.

Before Dispatch, your Claude Cowork session was tethered to your desk. Now your phone is a remote control for your desktop AI. One persistent conversation thread. You send a message from your phone; Claude executes on your computer. Files stay local. User approval is required before any action.

The immediate use cases seem modest: trigger an automation you forgot to schedule, grab a file while you're across town, check on a running task during a commute.

But zoom out.

What Dispatch is actually demonstrating is a continuous agent relationship, one that does not reset when you close the laptop. The same context. The same ongoing work. Accessible from wherever you are.

That is not a productivity feature. That is a fundamentally different category of relationship with software.

The kind that, in five years, makes "I had to switch devices" sound the way "I had to dial in" sounds today.

Forbes framed it correctly: Claude is advancing from a chat interface to "a more integrated operational layer for daily tasks."

That framing is not hype. It is an accurate description of the architecture.

Managed Agents: The Enterprise Layer

Claude Cowork is for individuals and knowledge workers. Claude Managed Agents is Anthropic's enterprise play, and it signals where all of this is heading.

Launched on April 8, 2026, Managed Agents provides any company building on the Claude Platform with the full production stack for deploying AI agents at scale. You define what agents should do, what tools they have access to, and what guardrails apply. Anthropic handles the rest: sandboxed execution, long-running sessions, multi-agent coordination, credential management, and end-to-end tracing.

At $0.08 per runtime hour, the pricing is accessible.

Read "accessible" with caution. At 1,000 agent-hours a month, that's $80. At 100,000 agent-hours a month, which is the whole point of multi-agent coordination, that's $8,000. And almost nobody models the 100,000-hour month before they are already in it.

(They model 1,000. Then they grow. Then they get a bill.)
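The arithmetic above is worth running against your own growth curve before the bill runs it for you. This sketch uses only the $0.08 runtime-hour rate quoted in this article; it does not model any other charges, and the 100% month-over-month growth in the example is a hypothetical, not a forecast.

```python
RATE_PER_RUNTIME_HOUR = 0.08  # USD, the Managed Agents rate cited in this article

def monthly_runtime_cost(agents: int, hours_per_agent: float) -> float:
    """Runtime-hour charge only; any other usage charges are not modeled."""
    return round(agents * hours_per_agent * RATE_PER_RUNTIME_HOUR, 2)

def project_costs(start_hours: float, monthly_growth: float, months: int) -> list[float]:
    """Monthly runtime cost if total agent-hours grow by a fixed fraction each month."""
    costs = []
    hours = start_hours
    for _ in range(months):
        costs.append(round(hours * RATE_PER_RUNTIME_HOUR, 2))
        hours *= 1 + monthly_growth
    return costs

print(monthly_runtime_cost(agents=1, hours_per_agent=1_000))    # 80.0
print(monthly_runtime_cost(agents=100, hours_per_agent=1_000))  # 8000.0
print(project_costs(1_000, monthly_growth=1.0, months=4))       # [80.0, 160.0, 320.0, 640.0]
```

Doubling monthly, the 1,000-hour pilot crosses the 100,000-hour, $8,000 mark within about seven months.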

The 2026 State of AI Agents report is direct about where the industry is: agents are no longer experimental. They are in production. The limiting factors now are integration, security, and operational scalability, not model capability.

One case study from Anthropic's 2026 Agentic Coding Trends Report: a company achieved 89% AI adoption across its entire organization with 800+ AI agents deployed internally. Design teams used Claude Artifacts to prototype during customer interviews in real time; concepts that would normally take weeks were turned around on the spot.

Reality check: The infrastructure question, the three to six months of engineering work just to make an agent production-ready, is no longer the barrier it was. The gap between prototype and production is closing fast. Organizations that treat this as a 2027 problem are already behind.

By 2027, "already behind" will be a polite way of describing them.

The Risks Nobody Mentions in the Launch Video

The opportunities are real. So are the ways this can go wrong.

The ambiguity trap. Cowork's most common failure mode is vague instructions. If you cannot articulate the outcome clearly, the agent cannot execute reliably. This is a human skill gap, not a model bug. Most people don't know they have it until the third failed automation. By the fourth, they're blaming the tool. By the fifth, they're tweeting about how AI is overhyped.

Token quota shock. Cowork burns context fast, especially with Computer Use enabled. Screenshots and image processing add up quickly. PCWorld discovered this the hard way when Dispatch triggered an automation they did not expect. Budget for it before it surprises you.

Or don't, and discover what "I had no idea it could cost this much" sounds like out of your own mouth.
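Why screenshots add up so quickly is easiest to see with numbers. The sketch below is a rough model under loudly stated assumptions: every figure in it (1,500 tokens per screenshot, 200 text tokens per step, 20 steps) is a hypothetical placeholder, and it assumes every earlier step stays in the context that is re-read on each new step. That is a simplification, since real agents may trim or summarize old images. Under that assumption, cost grows with the square of the step count, not linearly.

```python
def cumulative_input_tokens(steps: int, screenshots_per_step: int,
                            tokens_per_screenshot: int, text_tokens_per_step: int) -> int:
    """Total input tokens across a task if step k re-reads all context from steps 1..k.
    Assumes nothing is ever trimmed from context (a simplification)."""
    per_step = screenshots_per_step * tokens_per_screenshot + text_tokens_per_step
    return sum(k * per_step for k in range(1, steps + 1))

# Hypothetical placeholder figures, not measured Cowork numbers.
with_screens = cumulative_input_tokens(20, 1, 1_500, 200)
text_only = cumulative_input_tokens(20, 0, 1_500, 200)
print(with_screens, text_only)  # 357000 42000
```

Same task, same number of steps, roughly 8.5 times the input tokens once a screenshot rides along with each step.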

Security surface. Granting an AI agent access to local files, browser sessions, and app integrations creates a real attack surface. Anthropic has built-in safeguards against prompt injection, and Dispatch requires user approval before any action, but the risk does not disappear. It relocates.

The question is not whether to trust the AI. It is whether your systems are hardened against someone who would exploit it.

(Spoiler: they aren't. Almost nobody's are. This is the InfoSec story of 2026, and most of you reading this are part of it.)

The "sounds right" problem. Claude Code can produce code that is syntactically correct and architecturally wrong. Cowork can complete a task that technically fulfills the instruction while missing the intent entirely. Both require review. Neither replaces judgment.

A code review at 11 PM is not a code review.

Reliability cliff on complexity. Cowork performs well on simple to mid-complexity tasks. It stalls on high-ambiguity, multi-step workflows. Knowing where that cliff is before you delegate something mission-critical is not optional.

It feels optional. It is not.

The Opportunities Worth Moving on Now

The compounding advantage is real, and it's already diverging between early movers and everyone else.

Teams building well-structured Cowork systems and Claude Code workflows are accumulating institutional knowledge. Every improvement to a CLAUDE.md file or a project briefing document makes every future task better.

That gap widens every month.

The non-technical unlock. Cowork is the first genuinely agentic AI tool that does not require engineering skills to deploy. Knowledge workers who spent their careers avoiding terminal windows are now building autonomous workflows. That changes who gets to participate in this shift, and by how much.

This is also a quiet career risk for the people who don't participate. The "I'll learn it when I need to" crowd is about to learn it in a hurry, on someone else's timeline.

Multi-agent systems are no longer theoretical. Managed Agents makes it feasible for mid-size organizations to run coordinated fleets of specialized agents, one for research, one for drafting, and one for scheduling, with a supervisor agent coordinating them. This is not a roadmap item. It is the current Anthropic operational model.
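The supervisor pattern is easier to reason about in miniature. In the sketch below, the three specialists are hypothetical stubs (plain functions, not Managed Agents API calls), and the supervisor does only the coordination part: route each subtask to the right specialist, hand the latest output forward as context, and escalate anything no specialist owns.

```python
from typing import Callable

# Hypothetical specialist agents, stubbed as plain functions.
def research_agent(task: str, context: str) -> str:
    return f"notes on {task}"

def drafting_agent(task: str, context: str) -> str:
    return f"draft of {task} using [{context}]"

def scheduling_agent(task: str, context: str) -> str:
    return f"booked: {task}"

SPECIALISTS: dict[str, Callable[[str, str], str]] = {
    "research": research_agent,
    "draft": drafting_agent,
    "schedule": scheduling_agent,
}

def supervisor(plan: list[tuple[str, str]]) -> list[str]:
    """Run (role, task) pairs in order, passing the latest output along as context."""
    results: list[str] = []
    context = ""
    for role, task in plan:
        agent = SPECIALISTS.get(role)
        if agent is None:
            results.append(f"escalated to a human: {task}")  # no specialist owns it
            continue
        context = agent(task, context)  # each specialist sees the previous output
        results.append(context)
    return results

for line in supervisor([
    ("research", "competitor pricing"),
    ("draft", "pricing memo"),
    ("schedule", "memo review with CFO"),
    ("legal", "contract redlines"),
]):
    print(line)
```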

The Dispatch model as precedent. Continuous, cross-device AI relationships will normalize. The companies and individuals who build those relationships now, with proper context files, feedback loops, and decision rules, will have agents that know them.

Everyone else will keep starting over from a blank context window.

Forever.

What This Actually Means

The shift Cowork and Code represent is not "AI got better at answering questions." It is that AI crossed the line from responding to acting.

For individuals, the gap between "I should do this" and "this is done" has just collapsed. The cognitive overhead of context-switching, follow-up, and administrative grind, the stuff that quietly eats three hours out of every workday, is now delegable.

Three hours a day. Compounded over a quarter. The math is unkind to the people who refuse to engage with it.

For teams: the architecture of work itself is changing. Not because AI is replacing roles, but because the overhead that once justified those roles is evaporating. What survives is the judgment layer: strategy, relationships, decisions that require context only a human carries.

For businesses: Managed Agents signals that the infrastructure question has been largely eliminated. The gap between prototype and production is closing fast. The organizations that understand this in 2026 will not be explaining it to competitors in 2027. They'll be operating at a level competitors will struggle to reverse-engineer.

By the time competitors notice, the lead is already a year old.

The Honest Closing Line

The series started with OpenAI's Workspace Agents: fast, deployable, excellent at execution, limited in depth.

Claude Cowork and Claude Code are the thinking layer. Slower to set up. Harder to master. When configured correctly, genuinely more capable of the kind of work that actually matters.

Anthropic made it possible to build a Chief of Staff that knows you, grows with you, and acts on your behalf without waiting to be asked.

They did not make it easy.

That gap between possible and easy is where most people give up.

Don't be like most people.

Part 2 of the AI Chief of Staff series. Part 1 covered OpenAI Workspace Agents, the fast-moving executor. Part 3 dismantles OpenClaw and the persona-vs-system trap. Part 4 closes with multi-agent orchestration, where 40% of pilots fail within six months. The full series lives at 3XNL.ai.