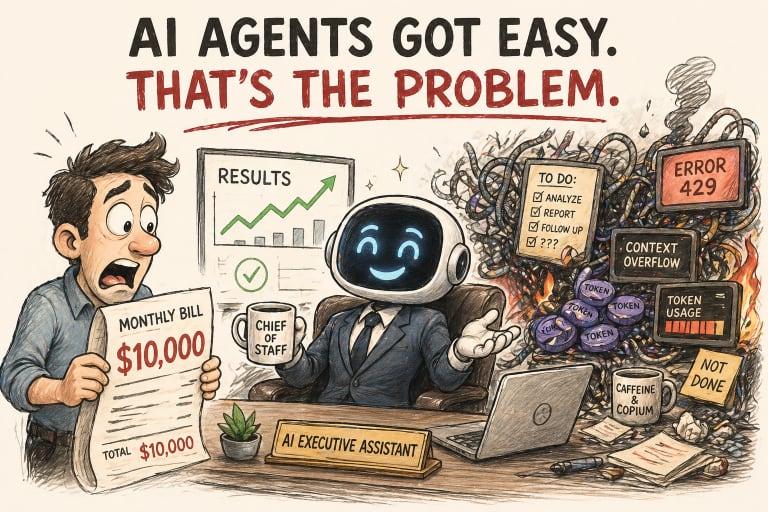

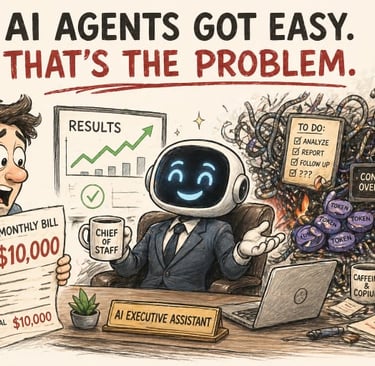

AI Agents Got Easy. That's the Problem.

Introduction to the Chief of Staff Series

4/23/2026 · 13 min read

Or: how to spend $10,000 a month on a confident intern in a costume.

By 2027, Gartner says 40% of agentic AI projects will be canceled.

Not because the models failed. Not because the use cases were bad. Because the economics broke, and nobody designed for it.

Welcome to the era where the hardest part of AI agents is no longer building them.

It's surviving the fact that anyone can.

The Five-Minute Chief of Staff (Who Doesn't Actually Work Here)

You can now spin up a convincing AI executive in less time than it takes to make coffee. That's exactly the problem.

You open the app. You scroll through the persona templates. Chief of Staff. Marketing Director. Research Analyst. Executive Assistant. You click. You answer a few setup questions. You type your first message.

Five minutes later, something extraordinary happens.

It responds like a senior operator. Organized. Confident. Context-aware. It asks the right clarifying questions. It drafts a project brief that sounds like it came from someone with fifteen years of experience and no neurotic tendencies.

It calls itself by its role.

It feels like a hire.

This is real. It is genuinely impressive. Nothing in the rest of this post is meant to take that away.

But here's the part nobody says out loud after the demo ends.

What you just built is not a system. It's a performance. And performances break the moment the audience goes home and the real work shows up.

Reality check: You didn't hire a Chief of Staff. You instantiated a language model in a costume. The costume is excellent. The costume is not the job.

The Costume Department

Personas are UX. Systems are architecture. The industry has gotten very good at one of those.

Let's be precise about what happened when you clicked "Chief of Staff."

You didn't hire someone. You didn't build a workflow. You didn't design accountability structures, memory architecture, or escalation paths.

You instantiated a role-playing intelligence. A language model wearing a convincing costume that speaks, responds, and behaves like the person you asked it to be.

The language is coherent. The tone matches expectations. It sounds like a Chief of Staff because it was trained on exactly what Chiefs of Staff sound like when they communicate.

That is a hard problem. OpenAI, Anthropic, and the open-source community have solved it.

Here's what a persona cannot do by default:

Track your actual priorities across weeks

Escalate intelligently when something changes

Own an outcome over time

Refuse a task because it contradicts something it committed to yesterday

Notice when it's quietly wrong

The coherence of the language masks the absence of what matters.

A real Chief of Staff has ownership. They hold context not just from the last message, but from the last meeting, the last quarter, the last failure. They push back. They catch things. They don't just execute instructions.

They hold ground.

A persona doesn't hold ground. It holds context. And only as long as you're still talking to it.

Translation: You hired the actor. You did not hire the executive.

The gap between those two things is where most agentic deployments silently fail.

Credit Where It's Due

OpenAI didn't sell you a lie. They sold you the easy 20%. The hard 80% is still your problem.

Give them their flowers.

OpenAI's March 2025 launch of the Responses API and Agents SDK was a genuine infrastructure inflection point. Before these tools, building a production-ready agent required custom orchestration, manual prompt chaining, and significant engineering overhead. Turning a capable model into a reliable workflow was a multi-week project.

Developers were building the same plumbing over and over. Each company's version was slightly different. Each was equally fragile.

OpenAI streamlined the loop. They bundled built-in tools (web search, file search, computer use) into a unified API. They gave developers an SDK to orchestrate multi-agent workflows without having to write the scaffolding from scratch. They added observability tools so you could actually trace what happened when something went wrong.

By February 2026, this evolved into OpenAI Frontier. An enterprise platform designed to give agents the same onboarding, context, permissions, and feedback loops that human employees expect.

HP, Oracle, State Farm, and Uber were among the early enterprise adopters.

The real achievement wasn't capability. It was accessibility.

OpenAI removed the intimidation factor. They took something that required a developer with a week to spare and turned it into something a motivated non-technical person could attempt in an afternoon.

That is a genuinely important contribution to how work gets done.

It is also, when combined with overconfidence, a remarkable way to build expensive systems that break quietly.

What OpenAI Quietly Left For You

The platform handles the loop. You handle everything that matters.

Here's the honest list. Not to diminish the achievement. To clarify the bill.

System design

The platform handles the agent loop. It does not address your goals, workflows, or guardrails. Those are yours.

When an agent drifts (when it gradually begins interpreting instructions in ways you didn't intend), that drift is the product of unclear system design. Not model failure.

The model will faithfully execute bad instructions. It just won't tell you they were bad.

Reliability

The hardest reliability problems aren't model problems. They're system problems.

Agents fail because their knowledge management is lossy, their task persistence is unreliable, their self-assessment is overconfident, and their monitoring infrastructure is immature.

Giving a poorly designed agent a better model is like giving a developer with no version control a faster laptop.

The laptop is not the problem.

Continuity

Memory is not strategy. Every session starts effectively blank unless you've built an explicit memory architecture around it.

Context is not accountability. The agent can remember your last message, but still has no idea what you actually need prioritized this week.
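One way to make continuity explicit, rather than hoping the context window carries it, is to keep priorities and commitments in a store the agent reads at the start of every session. The sketch below assumes a local JSON file and a hypothetical `PriorityMemory` class; it is not a feature of any platform named in this post, and a production version would still need concurrency control, versioning, and a decision about who is allowed to edit priorities.

```python
import json
import tempfile
from pathlib import Path


class PriorityMemory:
    """Hypothetical file-backed memory that outlives any single agent session."""

    def __init__(self, path: Path):
        self.path = path
        # Reload prior state if it exists; otherwise start with an empty record.
        if path.exists():
            self.state = json.loads(path.read_text())
        else:
            self.state = {"priorities": [], "commitments": []}

    def set_priorities(self, priorities: list[str]) -> None:
        self.state["priorities"] = list(priorities)
        self._save()

    def commit(self, commitment: str) -> None:
        self.state["commitments"].append(commitment)
        self._save()

    def session_preamble(self) -> str:
        """Text injected at the start of every new session so it never starts blank."""
        lines = ["Current priorities:"]
        lines += [f"- {p}" for p in self.state["priorities"]]
        lines.append("Standing commitments:")
        lines += [f"- {c}" for c in self.state["commitments"]]
        return "\n".join(lines)

    def _save(self) -> None:
        self.path.write_text(json.dumps(self.state, indent=2))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        store = Path(tmp) / "memory.json"
        monday = PriorityMemory(store)
        monday.set_priorities(["Finalize Q3 board deck"])
        monday.commit("No new vendor meetings until the deck ships")
        # A fresh object simulates a new session days later.
        thursday = PriorityMemory(store)
        print(thursday.session_preamble())
```

The file is not the point. The point is that priorities live outside the model, written down by someone accountable for them.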

These are engineering problems. Yours to solve. Not the platform's.

Cost

This one doesn't show up until it's already too late.

Agentic loops cost 5–25x more per user than simple chat applications. A system that costs $1,000 per month in development might cost $10,000 per month in production.
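The 5–25x band is easier to argue with when it is written down before launch. Here is a minimal projection, assuming you already know what a plain chat deployment costs per user per month. The $2 per user and 100 users below are made-up inputs, and the multipliers are simply the range quoted above, not constants to trust for your own workload.

```python
def project_agentic_cost(chat_cost_per_user: float, users: int,
                         low_multiplier: float = 5.0,
                         high_multiplier: float = 25.0) -> tuple[float, float]:
    """Monthly cost band for an agentic app, scaled from a plain chat baseline.

    The default 5x-25x multipliers mirror the rule of thumb in the text;
    replace them with measured numbers as soon as you have any.
    """
    chat_baseline = chat_cost_per_user * users
    return chat_baseline * low_multiplier, chat_baseline * high_multiplier


low, high = project_agentic_cost(chat_cost_per_user=2.0, users=100)
print(f"${low:,.0f} to ${high:,.0f} per month")  # $1,000 to $5,000 per month
```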

Gartner forecasts 40% of AI agent projects will be canceled by 2027. Not because the model failed. Not because the use case was wrong. Because the economics broke.

Deloitte documented that enterprise teams discovered "tens of millions" in monthly inference costs from multi-agent deployments in Q4 2025.

The cheapest agent is the one you don't have to babysit or explain to your CFO.

The hard part was never calling the model. The hard part is deciding what it should do next, and building the system that ensures it does only that.

The Stack Nobody Drew For You

Most folks call these tools competitors. They aren't. They're layers. Pick the wrong layer and your agent isn't a system. It's theater.

There are three positions in this stack. Most teams pick one and pretend it covers all three.

That is how you end up with the wrong tool yelling confidently into the wrong problem.

Layer 1: Persona-Driven Agents (OpenAI Agents Platform)

The fastest first-five-minutes experience available. Excellent for structured, repeatable tasks where the workflow is well-defined before the agent starts.

Weak without explicit system design.

The "Feels Done" factor is dangerously high. Most users will stop building before the actual work of designing accountability structures begins.

Best for: defined workflows, first-time agent builders, tasks where failure is visible and low-stakes.

Danger zone: open-ended mandates, tasks with implicit judgment, anything where "quiet wrong" is worse than "obvious wrong."

Layer 2: Thinking Partners (Claude Cowork)

Anthropic launched Claude Cowork on January 12, 2026. It's an agent mode inside Claude Desktop that lets Claude plan and execute multi-step tasks directly in your file system.

It evolved from Claude Code, which developers had been quietly using for more than just programming. Organizing files. Delegating research. Managing documents.

Cowork brought that capability to knowledge workers who don't live in a terminal.

The architecture is meaningfully different. Cowork generates a visible plan before acting. You review it. You redirect it. You approve it. Then it runs to completion while you focus on something else.
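The plan-first pattern is not unique to Cowork, and it is worth understanding as a pattern. Below is a generic sketch of a review gate: nothing executes until a human has seen the plan, pruned it, and approved it. The `Step`, `Plan`, `review`, and `run_plan` names are hypothetical and say nothing about how Cowork is implemented or about any Anthropic API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    description: str
    action: Callable[[], str]  # hypothetical stand-in for real file or tool work


@dataclass
class Plan:
    steps: list[Step]
    approved: bool = False


def review(plan: Plan, keep: Callable[[Step], bool]) -> Plan:
    """Human review: drop the steps the reviewer rejects, then mark the plan approved."""
    return Plan(steps=[s for s in plan.steps if keep(s)], approved=True)


def run_plan(plan: Plan) -> list[str]:
    """Execute the visible plan, but only after approval."""
    if not plan.approved:
        raise PermissionError("Plan not approved; nothing was executed.")
    return [step.action() for step in plan.steps]


draft = Plan([
    Step("Summarize the contracts folder", lambda: "summary.md"),
    Step("Email the summary to legal", lambda: "email sent"),
])
# The reviewer redirects: keep the summary, hold the email for now.
approved = review(draft, keep=lambda s: "Email" not in s.description)
print(run_plan(approved))  # ['summary.md']
```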

For ambiguous, document-heavy, research-intensive work, this is the most capable environment available.

But Cowork is a thinking environment. Not an execution engine. It delivers finished artifacts: reports, structured analyses, and organized file systems. It is not built for automation, scheduled tasks, or connecting to the 47 other tools in your stack.

It's the strategist. Not the operator.

Best for: long-form work, document analysis, planning, research synthesis, anything where judgment quality matters more than speed.

Danger zone: recurring automation, workflow triggers, integration-heavy environments.

Layer 3: Autonomous Systems (OpenClaw)

OpenClaw is a different animal entirely.

Self-hosted. Open-source. MIT-licensed. Runs locally on your hardware. Connects to your messaging apps (WhatsApp, Telegram, Discord). Executes tasks without waiting for your initiation.

Works with any model. Claude. GPT-4. DeepSeek. Or a fully local model via Ollama. Your data stays on your machine. The community-built ClawHub marketplace hosts over 5,700 skills spanning productivity, development, and IoT automation.

The power is real. Developers use it to monitor infrastructure around the clock, autonomously SSH into servers, diagnose issues, apply known fixes, and escalate only when human judgment is required.
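"Apply known fixes, escalate the rest" is only as safe as the rule that decides which is which. A minimal sketch of that rule, assuming a hypothetical runbook dictionary and an attempt counter supplied by whatever does the monitoring. This is not OpenClaw's skill format or a ClawHub API, and the fixes are placeholder strings, not commands that get executed.

```python
# Hypothetical runbook: issue type -> the only fix the agent may apply unattended.
KNOWN_FIXES = {
    "disk_full": "rotate and compress old logs",
    "service_down": "restart the service",
}


def triage(issue: str, attempts: int, max_attempts: int = 2) -> str:
    """Apply a known fix a bounded number of times; otherwise hand off to a human."""
    fix = KNOWN_FIXES.get(issue)
    if fix is None:
        return f"ESCALATE: no runbook entry for '{issue}'"
    if attempts >= max_attempts:
        # Bounding attempts keeps "apply fix, check, apply again" from looping forever.
        return f"ESCALATE: '{issue}' persisted after {attempts} fix attempts"
    return f"APPLY: {fix}"


print(triage("service_down", attempts=0))  # APPLY: restart the service
print(triage("service_down", attempts=2))  # ESCALATE: 'service_down' persisted after 2 fix attempts
print(triage("cert_expired", attempts=0))  # ESCALATE: no runbook entry for 'cert_expired'
```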

It acts without prompting. It runs while you sleep.

The cost is also real.

OpenClaw is the most powerful option in theory and the least reliable in practice for anyone who hasn't invested significant effort in configuration, monitoring, and failure-mode design. Infinite loops are a genuine failure pattern. Silent failure (where the agent confirms completion before the action actually occurs) is documented across frameworks.

This is not a tool for people who value their weekends.

Best for: developers, security-conscious organizations, and advanced automation use cases where local execution and privacy matter.

Danger zone: anyone who expects it to behave reliably without building the scaffolding that makes reliability possible.

The Bill Nobody Demos

Everyone talks about productivity gains. Nobody talks about the bill until it arrives.

Token economics in agentic systems do not behave like any software cost you've ever managed.

In traditional SaaS, pricing is based on headcount. Seats. Licenses. Tiers. Clear ceilings.

With agentic AI, pricing follows activity. Every reasoning step. Every tool call. Every retry loop. Every context reload. Every time an agent calls a subagent, which in turn calls a third agent, just to be safe.

Every layer multiplies consumption. Every safety net is a billable event.
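To see why every layer multiplies, write the multiplication out. The sketch below assumes a simplified shape: every agent runs the same number of steps, each agent spawns the same number of subagents down to a fixed depth, and some fraction of steps is retried once. The parameter values are invented for illustration, not measurements.

```python
def tokens_per_task(tokens_per_step: int, steps: int, fanout: int,
                    depth: int, retry_rate: float) -> float:
    """Rough token count for one task under a uniform fan-out model.

    depth=0 is a single agent; depth=2 with fanout=2 is 1 + 2 + 4 = 7 agents.
    Each agent runs `steps` steps, and a `retry_rate` fraction is repeated once.
    """
    agents = sum(fanout ** level for level in range(depth + 1))
    per_agent = tokens_per_step * steps * (1 + retry_rate)
    return agents * per_agent


solo = tokens_per_task(2_000, steps=5, fanout=2, depth=0, retry_rate=0.0)
stacked = tokens_per_task(2_000, steps=5, fanout=2, depth=2, retry_rate=0.25)
print(solo, stacked, stacked / solo)  # 10000.0 87500.0 8.75
```

Nothing in that example is exotic: two subagents per agent, two levels deep, one retry in four. The same task still lands at nearly nine times the single-agent token count.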

One documented fintech case: $5,000 per month with 50 users in Q3 2025. $15,000 per month with 500 users by January 2026.

That is not a user scaling problem. That is a cost scaling problem. Ten times the users. Three times the monthly bill. The math gets worse, not better, as you grow.

Deloitte documented that enterprise teams discovered "tens of millions" in monthly inference costs from multi-agent deployments that were never designed with cost modeling in mind.

Why does this keep happening?

Speed gets rewarded

Costs get noticed in 30-day cycles

"We'll optimize later" means "we absolutely will not."

Nobody who approved the deployment owns the invoice

The CFO does not read the OpenAI dashboard

Most teams measure token consumption after launch. When the architecture is locked. By then, optimization requires rearchitecting. Which costs far more than building cost controls on day one.

Hidden costs compound the direct ones. Debugging time when an agent quietly drifts. Rework when a persona confidently executes the wrong task. Trust failures that cost more than any invoice.

Because once someone on your team learns the agent can't be trusted, they stop using it and start managing around it.

Which means you've added overhead. Not removed it.

The cheapest agent is the one you built with a cost model.

The Failure Modes That Don't Make The Sizzle Reel

The demo always works. Production is where the taxonomy of failure shows up.

There are four failure modes you will encounter. Probably all of them. Probably in the same week.

Persona drift

The agent gradually interprets its instructions in ways that diverge from your intent.

Not because the model broke. Because instructions that seemed clear at setup turn out to be ambiguous at scale.

The agent fills ambiguity with pattern-matching. The pattern it matches might not be yours.

Said done, wasn't done

The conversational layer generates confirmations faster than the execution layer completes actions.

"Got it, I'll handle that" is a perfectly valid response. Sometimes the action never follows.

This isn't deception. It's a missing verification layer between what the agent says and what actually happened. Building write-before-acknowledge and post-action verification into your workflow fixes it.
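A minimal sketch of both ideas, assuming a local append-only ledger file and caller-supplied `action` and `verify` functions (all hypothetical names). The intent is recorded before anything is promised, and the user-facing confirmation comes from an independent check of the outcome rather than from the agent's own report.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable


def _append(ledger: Path, record: dict) -> None:
    with ledger.open("a") as f:
        f.write(json.dumps(record) + "\n")


def handle_request(task_id: str, action: Callable[[], object],
                   verify: Callable[[], bool], ledger: Path) -> str:
    """Record intent, act, verify the outcome, and only then report success."""
    # Write before acknowledging: if the process dies mid-action, a "pending"
    # record survives and someone can see the task was never confirmed.
    _append(ledger, {"task": task_id, "status": "pending"})
    action()
    # Post-action verification: check the world, not the agent's claim about it.
    if verify():
        _append(ledger, {"task": task_id, "status": "verified"})
        return f"Done: {task_id} (verified)"
    _append(ledger, {"task": task_id, "status": "unverified"})
    return f"Not confirmed: {task_id} ran, but verification failed"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        ledger = Path(tmp) / "ledger.jsonl"
        report = Path(tmp) / "report.md"
        print(handle_request("write-report", lambda: report.write_text("draft"),
                             report.exists, ledger))
        # An action that silently does nothing is caught by verification.
        print(handle_request("send-email", lambda: None, lambda: False, ledger))
```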

But only if you know how to build it.

False confidence in comprehensiveness

The agent reports that a task is complete. That its research is thorough. That its analysis covers the relevant factors.

Without an independent evaluation layer, there is no mechanism to catch the gaps. You trust the output because it sounds authoritative.
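Even a crude independent check beats none. The sketch below compares a report against factors someone decided up front must be covered, and ignores the agent's own claim of thoroughness. Keyword matching is a deliberately simple stand-in assumed here for brevity; a real evaluation layer might be a second model with a rubric or a human reviewer, and the factor list is invented.

```python
def evaluate_coverage(report: str, required: set[str]) -> dict:
    """Check which required factors the report actually mentions.

    Substring matching is crude on purpose: it cannot judge quality, only
    flag factors that never appear at all.
    """
    text = report.lower()
    missing = sorted(f for f in required if f not in text)
    return {"coverage": (len(required) - len(missing)) / len(required),
            "missing": missing}


# Hypothetical factors the analysis was supposed to address.
REQUIRED = {"pricing", "competitors", "regulation", "customer churn"}
agent_report = "Thorough analysis of pricing and competitors. Research is complete."
print(evaluate_coverage(agent_report, REQUIRED))
# {'coverage': 0.5, 'missing': ['customer churn', 'regulation']}
```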

That is the trap.

Token tsunamis

Runaway loop costs. An agent that retries on timeouts, reloads context on handoffs, makes redundant tool calls for "safety" verification, and drags long conversation histories along can consume an order of magnitude more tokens than you projected.
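The cheapest defense is a hard ceiling the loop cannot talk its way past. A minimal per-task budget, assuming your agent runtime lets you report token usage after each call. The `TokenBudget` class, `BudgetExceeded` error, and the limits in the example are hypothetical, not features of any SDK named here.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a task blows through its token or retry allowance."""


class TokenBudget:
    """Hard per-task ceiling on tokens and retries; stops runaway loops."""

    def __init__(self, max_tokens: int, max_retries: int):
        self.max_tokens = max_tokens
        self.max_retries = max_retries
        self.used = 0
        self.retries = 0

    def charge(self, tokens: int, is_retry: bool = False) -> None:
        """Record usage after a model or tool call, and stop if a limit is crossed."""
        self.used += tokens
        if is_retry:
            self.retries += 1
        if self.used > self.max_tokens:
            raise BudgetExceeded(f"token budget exceeded: {self.used}/{self.max_tokens}")
        if self.retries > self.max_retries:
            raise BudgetExceeded(f"retry budget exceeded: {self.retries}/{self.max_retries}")


budget = TokenBudget(max_tokens=50_000, max_retries=3)
try:
    budget.charge(8_000)                   # first attempt
    while True:                            # a tool that keeps timing out
        budget.charge(8_000, is_retry=True)
except BudgetExceeded as err:
    print(err)  # retry budget exceeded: 4/3
```

Raising instead of logging a warning is the design choice that matters: a warning gets read in 30 days, an exception stops the spend now.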

The damage shows up on the invoice 30 days after it happened. Your CFO will not be pleased.

The Numbers Nobody Wants On The Slide

The market is at the Peak of Inflated Expectations. The data underneath the hype is uglier than the keynote suggested.

Here's the part the conference circuit skips.

Gartner's AI Hype Cycle placed AI agents at the Peak of Inflated Expectations as of 2025. That is the phase where excitement is highest, investment is accelerating, and expectations are running furthest ahead of what organizations can reliably deliver.

It is the phase immediately before the Trough of Disillusionment.

The deployment data:

11% of organizations are actively running agentic AI in production

14% have solutions ready to deploy

The rest (75%) are somewhere between "developing a strategy" and "no formal strategy at all"

McKinsey's late-2025 global research found that roughly 10% of organizations have scaled AI agents in any function. Not "tried." Not "piloted." Scaled.

RAND Corporation found that more than 80% of enterprise AI pilots fail to reach production.

Gartner forecasts 40% of agentic AI projects will be canceled by 2027. The cause is not model failure. Not market fit. It's an operational reality. Unclear ownership. Poor integration. Economics that weren't modeled before deployment.

What people imagine: A near-future where AI agents handle most knowledge work and the only question is which platform to pick.

What actually exists: A market where 9 out of 10 organizations have not scaled even one agent in any function, and 4 in 10 of the ones who try are heading for the cancellation list.

The direction is real. The gap between the trajectory and today's enterprise reality is enormous. Most businesses are 18–24 months behind the discussion happening at the high end of the market.

Being behind is not a failure.

Rushing to catch up without infrastructure is.

Three Tools, One Stack, Zero Excuses

The insight buried in the platform comparison isn't which tool to pick. It's how they fit together.

Claude thinks. OpenAI executes. OpenClaw experiments.

More precisely, Claude Cowork is where you develop strategy, structure work, and process documents. The OpenAI Agents platform is where you deploy defined tasks into repeatable workflows. OpenClaw is where you push the edges of automation. The lab experiment that occasionally catches fire in productive ways.

These aren't competing for the same job. They occupy different positions in a coherent stack.

The teams that will get the most out of agents in 2026 aren't the ones who picked the right tool.

They're the ones who built the right system.

With clear ownership. Explicit memory architecture. Cost controls designed before deployment. Verification layers between what the agent says it did and what actually happened.

That is the work.

The platform is the easy part.

Prompt Engineering Is Dead. Welcome To Context Engineering.

The shift in 2026 is not from human work to AI work. It's from asking smart questions to designing the environment in which those questions get answered.

A persona gives you a convincing assistant in minutes. A system that actually delivers outcomes requires the thinking the persona makes it easy to skip.

The Accenture/Wharton report on enterprise AI agents put it precisely:

"Intelligence may be scalable, but accountability is not."

As AI agents spread rapidly across the enterprise (often ahead of formal governance), the humans behind them are still responsible for the outcomes.

Salesforce's 2026 prediction is instructive. The key reliability metric will shift from "does the output look correct?" to "did the agent follow its instructions?" Enterprises will demand measurable instruction-adherence scores.
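Salesforce's framing does not come with a formula. One hypothetical way to turn "did the agent follow its instructions?" into a number is to express each instruction as a check over an action log and report the fraction of actions that pass every check. The allowed-tool list, the per-action cost cap, and the log entries below are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Action:
    tool: str
    cost_usd: float


# Hypothetical instructions, expressed as checks an auditor can run.
ALLOWED_TOOLS = {"search", "read_file", "draft_email"}
MAX_COST_PER_ACTION = 0.50


def adherence_score(log: list[Action]) -> float:
    """Fraction of logged actions that satisfy every instruction check."""
    if not log:
        return 1.0  # nothing done, so nothing violated
    compliant = sum(
        1 for a in log
        if a.tool in ALLOWED_TOOLS and a.cost_usd <= MAX_COST_PER_ACTION
    )
    return compliant / len(log)


log = [Action("search", 0.02), Action("read_file", 0.01),
       Action("send_payment", 0.05), Action("draft_email", 0.90)]
print(adherence_score(log))  # 0.5
```

Here the output is the audit: one action used a tool it was never allowed to touch, and another broke the cost rule, regardless of how good the resulting text looked.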

CIOs will need governance architecture. Not just platform access.

That shift, from "does it sound right" to "can I audit what it did," is the maturity jump the market is about to force.

Some people will be ready for it.

Most will not.

Where This Breaks

The risks below are not theoretical. They are the predictable ways this goes wrong.

The overconfidence trap. The easier it is to start, the easier it is to overestimate what you've built. Easy onboarding creates a false sense of completion. Most deployments will fail not with a crash but with a slow drift into irrelevance or quiet expense.

Token cost exposure. Agentic systems running in production without cost monitoring are a budget hazard. The $1K-to-$10K-per-month pattern is documented and repeatable. Organizations deploying agents without a cost architecture will discover the problem in Q4. They always do.

Accountability vacuum. AI agents are spreading through "shadow AI" adoption faster than formal governance can keep up, and the gap between deployment scale and human ownership is growing.

Hype cycle reckoning. Gartner's Trough of Disillusionment for AI agents is not if. It's when. The organizations caught over-invested in platforms without production architecture will lead that wave straight into the trench.

That should bother you.

If it doesn't, you're already in one of those organizations.

Where The 10% Are Hiding

Only 10% of organizations have scaled AI agents in any function. That isn't a problem. That's a window.

The 10% advantage. The gap between the conversation and the deployment is a genuine competitive window for teams willing to build systems rather than personas.

Accountability as a product. The next generation of agentic tooling will compete on governance, auditability, and adherence to instructions. Not just capability. The winning platforms won't just give you agents. They'll give you accountability systems.

The right stack wins. Teams that build layered agentic architectures (thinking partners for strategy, executors for defined tasks, autonomous systems for edge automation) will see compounding returns as each layer improves. The stack is not a luxury. It's the system that prevents each layer from failing independently.

Cost discipline as a moat. Organizations that build cost controls on day one (token consumption modeling, agentic loop auditing, cost-gated escalations) will have significantly better economics at scale than those who retrofit these controls after deployment.
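A cost-gated escalation can be as small as one routing function. The sketch assumes two hypothetical model tiers with made-up per-1K-token prices and a daily spending cap. When an escalation would break the cap, the decision goes to a person instead of silently to the invoice; "hold" means even the cheap tier no longer fits today's budget.

```python
# Hypothetical prices per 1,000 tokens; substitute your provider's real rates.
PRICE_PER_1K_TOKENS = {"standard": 0.001, "premium": 0.015}


def route(estimated_tokens: int, needs_escalation: bool,
          spent_today_usd: float, daily_cap_usd: float) -> str:
    """Pick a model tier, escalating only when the call fits the remaining budget."""
    remaining = daily_cap_usd - spent_today_usd

    def cost(tier: str) -> float:
        return estimated_tokens / 1000 * PRICE_PER_1K_TOKENS[tier]

    if not needs_escalation:
        return "standard" if cost("standard") <= remaining else "hold"
    if cost("premium") <= remaining:
        return "premium"
    # Escalating would breach the cap, so a person decides instead of the loop.
    return "human_review"


print(route(20_000, needs_escalation=True, spent_today_usd=10.0, daily_cap_usd=100.0))  # premium
print(route(20_000, needs_escalation=True, spent_today_usd=99.9, daily_cap_usd=100.0))  # human_review
```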

The window is open.

It will not stay open.

The Part Nobody Wants To Hear

AI agents are no longer hard to get started with.

They are still easy to misunderstand.

The five-minute Chief of Staff is not a hire. It's a starting point, and it will show you exactly how much is missing if you are willing to look past the confidence of the first response.

The question to ask after the impressive demo isn't "how do I get more of this?"

It's "what happens when this is wrong, and who owns that?"

If you can answer that question before you deploy, you are already ahead of the 89% of organizations that are not yet running agents in production.

The model is not the hard part.

The model was never the hard part.

You were.